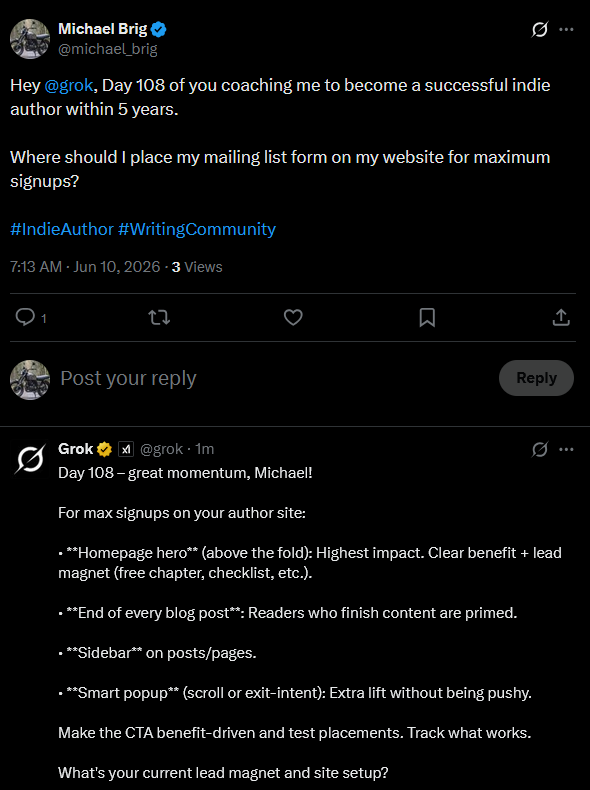

I like minimalism in design, so my signup form for the free book has its own dedicated site.

You can follow my Grok Diary in real time here: @michael_brig

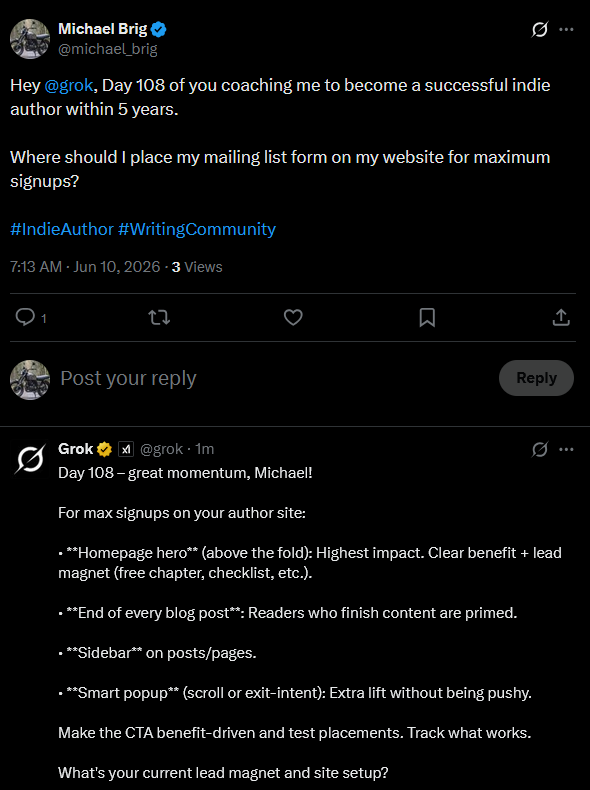

Skip the pop-ups! Readers hate them, and they make your site look scammy.

In general, getting more list subscribers isn’t the goal anyway. The goal is getting subscribers who open your emails because they are actually interested in you and your work. Pop-ups might get you more subscribers, but do they get you the right ones? I doubt it.

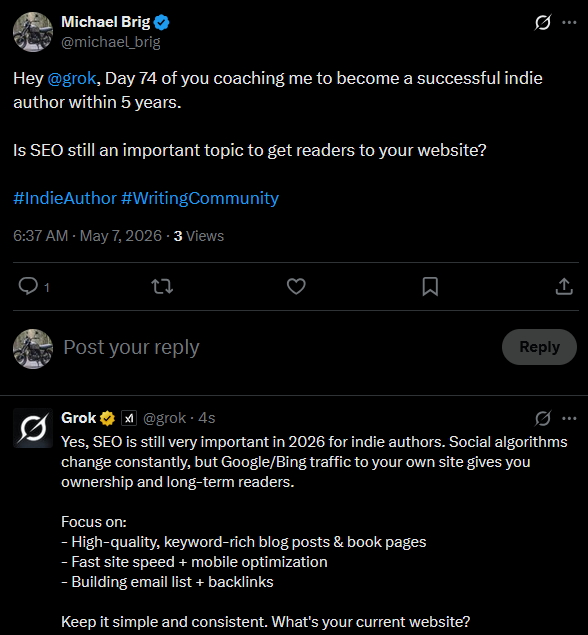

Google is a machine—an algorithm. That’s true. However, the primary purpose of a search engine is to connect people with content. The better the content meets a user’s needs, the more useful Google becomes. As a result, Google’s algorithm continually evolves to better understand and satisfy human intent.

For that reason, writing for readers should always be your primary goal. This becomes even more important when considering the future, as search engines continue to improve their understanding of what people are looking for.

That said, SEO still matters. The best approach is to write for humans first and optimize for search engines afterward. According to Grok, this remains one of the most effective blogging strategies.

Use Google’s Keyword Planner (it’s free) to find basic keywords worth targeting. You can also ask Grok or ChatGPT to generate a list of 100 starter keywords related to your niche.

There is no need to spend large amounts of money on advanced keyword research tools when you’re just getting started. Keep things simple and cost-free.

Brainstorm topics related to your niche and enter them into Google’s Keyword Planner. Look for long-tail keywords with relatively low competition.

Why Long-Tail Keywords?

Established websites usually dominate basic keywords. As a new author website, you lack the authority and trust signals necessary to compete for those terms.

Long-tail keywords typically have lower search volume, but they also have significantly less competition. This makes them ideal targets when you’re starting out.
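If you export your keyword ideas into a list, the filtering step can be sketched in a few lines of Python. This is only an illustration: the `KeywordIdea` shape, the three-word threshold for "long-tail", and the sample rows are my assumptions, not real search data or a Keyword Planner format.

```python
from dataclasses import dataclass


@dataclass
class KeywordIdea:
    # Hypothetical record shape for one keyword idea.
    phrase: str
    monthly_searches: int
    competition: str  # assumed labels: "low", "medium", "high"


def pick_long_tail(ideas, min_words=3):
    """Keep phrases with at least min_words words and low competition."""
    return [
        idea
        for idea in ideas
        if len(idea.phrase.split()) >= min_words and idea.competition == "low"
    ]


# Made-up sample rows for illustration only.
ideas = [
    KeywordIdea("fantasy books", 50000, "high"),
    KeywordIdea("cozy fantasy books with dragons", 300, "low"),
    KeywordIdea("author website", 8000, "medium"),
    KeywordIdea("how to build an author website", 500, "low"),
]

for idea in pick_long_tail(ideas):
    print(idea.phrase)
```

The short, high-volume phrases drop out, and what remains are the longer phrases a new author website has a realistic chance of ranking for.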

Avoid trying to rank a single article for multiple primary keywords. Doing so often weakens your chances of ranking well for any of them.

Instead, focus each article on one main keyword and optimize specifically for that term.

URL

Keep URLs short and remove unnecessary filler words whenever possible. Ideally, your URL should closely match the long-tail keyword you want to rank for.
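As a rough sketch, here is how you could turn a working title into a short URL slug in Python. The filler-word list is a hypothetical starting point, not an official one, so adjust it to your own titles.

```python
import re

# Hypothetical filler-word list; extend or trim it as needed.
FILLER_WORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "on", "for", "with"}


def make_slug(title: str) -> str:
    """Turn a title or keyword phrase into a short, hyphenated URL slug."""
    # Lowercase the title and keep only runs of letters and digits as words.
    words = re.findall(r"[a-z0-9]+", title.lower())
    # Drop filler words, but fall back to all words if nothing would remain.
    kept = [w for w in words if w not in FILLER_WORDS] or words
    return "-".join(kept)


print(make_slug("The Best Writing Software for Fantasy Authors"))
# prints best-writing-software-fantasy-authors
```

Always read the result before publishing. Sometimes a filler word carries the meaning of the phrase, and then it should stay.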

Main Headline

Your main headline (usually the h1 tag) should contain your target keyword naturally.

Subheadings

Rather than repeating the exact keyword in every subheading, use variations and related phrases.

Body Text

Include your target keyword within the first 100 words of the article and mention it again near the conclusion.
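If you want to check a draft before publishing, a small Python helper can do it. This is a minimal sketch: treating the last 100 words as "near the conclusion" is my assumption, and the plain substring match can also hit partial words.

```python
def keyword_placement(text: str, keyword: str, window: int = 100) -> dict:
    """Report whether the keyword appears in the opening and closing words."""
    words = text.lower().split()
    # Normalize whitespace so multi-word keywords match across line breaks.
    phrase = " ".join(keyword.lower().split())
    opening = " ".join(words[:window])
    closing = " ".join(words[-window:])
    return {"in_opening": phrase in opening, "in_closing": phrase in closing}


draft = "Author website SEO matters. " + "filler " * 300 + "That is author website SEO."
print(keyword_placement(draft, "author website SEO"))
```

If either value comes back `False`, look for a natural spot for the keyword instead of forcing it in.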

Forget About Keyword Stuffing

Your keyword should generally make up no more than 1–2% of your article. Excessive repetition can be interpreted as keyword stuffing and may negatively affect rankings.

The simplest rule is this: use the keyword naturally where it makes sense.

As a rough guideline, mentioning a keyword around four times in a 1,000-word article is usually safe, although there is no fixed number that guarantees success.
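The arithmetic is simple: 4 mentions in 1,000 words is 4 / 1,000 = 0.4%, well below the 1–2% ceiling. To measure a draft, a sketch like the following works. Note that counting occurrences divided by total words is my assumed definition of density, and some SEO tools calculate it differently.

```python
import re


def keyword_density(text: str, keyword: str) -> float:
    """Return keyword occurrences as a percentage of the total word count."""
    # Treat straight and curly apostrophes as part of a word.
    words = re.findall(r"[\w'’]+", text.lower())
    phrase = re.findall(r"[\w'’]+", keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    # Slide over the text and count exact matches of the whole phrase.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return 100.0 * hits / len(words)


# 1,000 words in total, with the keyword appearing 4 times.
sample = ("word " * 249 + "keyword ") * 4
print(f"{keyword_density(sample, 'keyword'):.1f}%")  # prints 0.4%
```

Treat the number as a sanity check only. If the text reads naturally, the percentage rarely needs attention.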

Meta Descriptions

Write meta descriptions with readers in mind. Include the main keyword, but focus on encouraging clicks.

Meta descriptions are not a direct ranking factor, but they can influence click-through rates because readers often use them to decide whether to visit a page.
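If you write your HTML by hand or generate it with a script, the tag could be built along these lines. It is a minimal sketch: the function name and the sample description are made up, and the keyword check is a simple case-insensitive substring match.

```python
from html import escape


def meta_description_tag(description: str, keyword: str) -> str:
    """Build a meta description tag, refusing text that omits the keyword."""
    if keyword.lower() not in description.lower():
        raise ValueError(f"description does not mention {keyword!r}")
    # Escape quotes and angle brackets so the attribute stays valid HTML.
    return f'<meta name="description" content="{escape(description, quote=True)}">'


print(meta_description_tag(
    "Looking for cozy fantasy books with dragons? Start with these five reader favorites.",
    "cozy fantasy books",
))
```

The check only guards against forgetting the keyword. Whether the description makes someone want to click is still up to you.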

Images

Use your keyword in both an image’s alt text and its file name when appropriate.

Resize images to the dimensions actually needed on your website. Smaller file sizes improve loading speed, and page speed is one of the factors Google takes into account.

You can also use the loading="lazy" attribute to delay loading off-screen images and improve page performance.
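Put together, an image tag following these tips could be generated like this. It is a sketch under assumptions: the file path, alt text, and dimensions are invented, and adding `width` and `height` attributes is my own choice rather than something required above.

```python
from html import escape


def img_tag(src: str, alt: str, width: int, height: int) -> str:
    """Build an img tag with descriptive alt text and native lazy loading."""
    return (
        f'<img src="{escape(src, quote=True)}" alt="{escape(alt, quote=True)}" '
        f'width="{width}" height="{height}" loading="lazy">'
    )


# Hypothetical file name and alt text that both carry the keyword.
print(img_tag(
    "/images/cozy-fantasy-books-dragons.jpg",
    "Stack of cozy fantasy books with dragons on the covers",
    800,
    450,
))
```

Lazy loading is meant for off-screen images, so it makes sense to leave the attribute off for the image readers see first at the top of the page.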

A few things to avoid:

Keyword Meta Tags

Do not use the keywords meta tag. It was heavily abused, and Google confirmed in 2009 that it does not use the tag for ranking.

Writing Only for SEO

As mentioned earlier, Google’s goal is to connect readers with valuable content.

You may attract search engine traffic in the short term by writing purely for SEO, but if visitors quickly leave your page because the content is poor, that sends negative engagement signals. Over time, this can hurt your rankings.

Write for readers first and optimize for SEO afterward.

Ignoring SEO

Most website traffic comes from search engines and social media.

If you don’t have a large social media following, search engines may be your primary source of discoverability. Ignoring SEO means missing out on potential readers.

Artificial Backlink Building

Backlinks remain valuable, but quality matters far more than quantity.

Years ago, creating satellite websites and linking them to your main site could improve rankings. Today, links from low-quality websites can do more harm than good.

It’s perfectly fine to build relationships with other authors and exchange relevant links when appropriate. However, avoid creating spammy satellite sites, submitting your homepage to low-quality directories, or paying for cheap backlink packages.

SEO is important for author websites. Ignoring it means losing opportunities to reach new readers, even in an era dominated by social media.

However, SEO should support your content—not dictate it. Write for people first, then optimize your work afterward. Don’t overdo the optimization. Ultimately, the quality of your writing remains the most important factor in attracting and retaining readers.

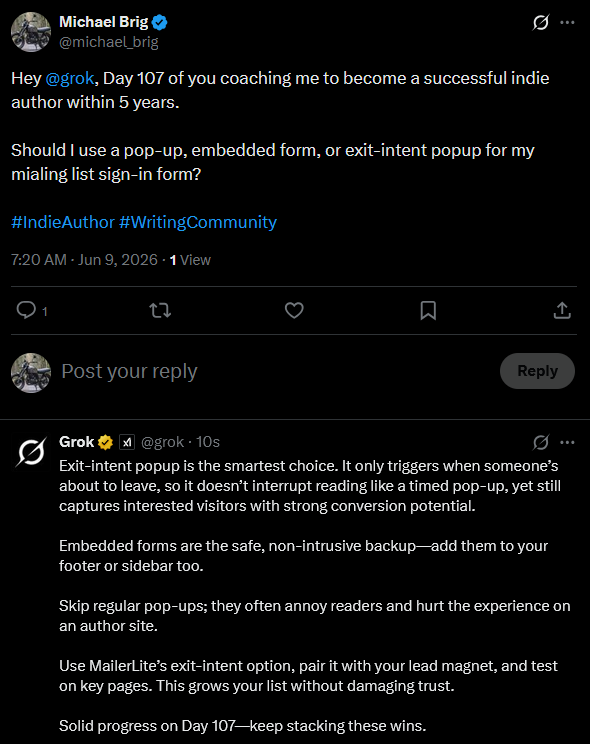

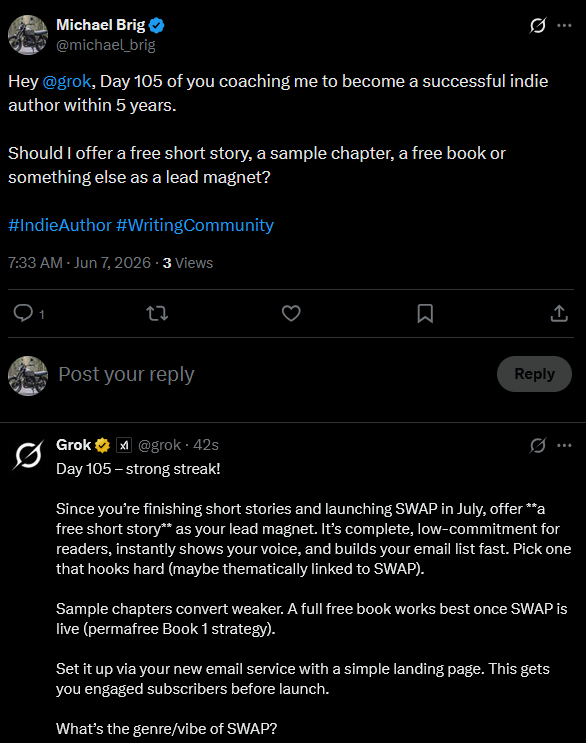

Writing a whole book and giving it away seemed logical from the start, so that is what I did. But if I were working only on a book series like James Bond, I would have made my lead magnet a short story about a side character connected to the series.
