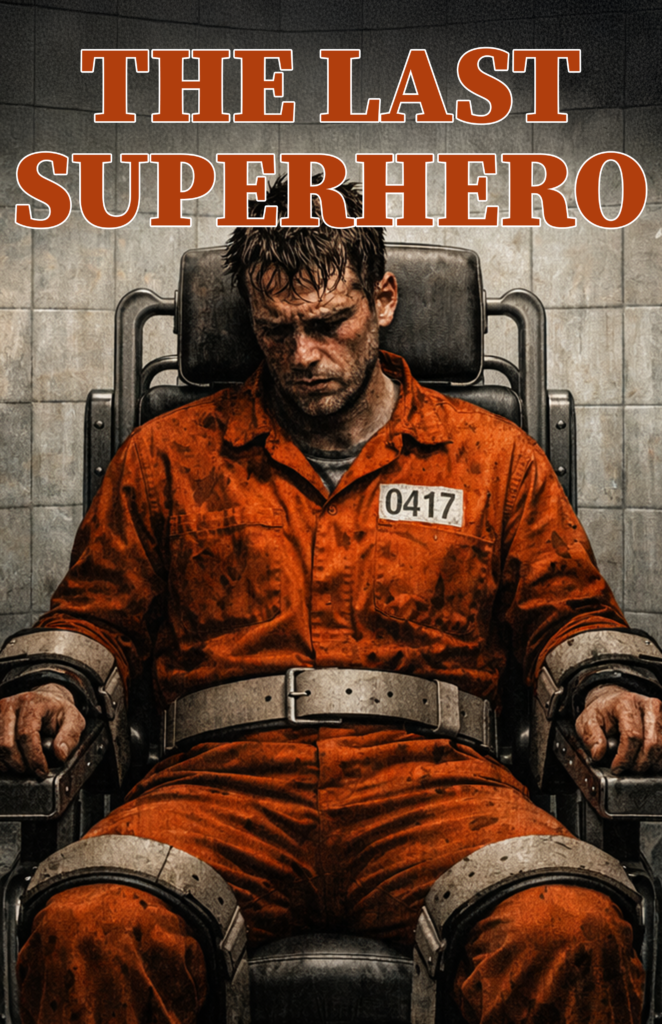

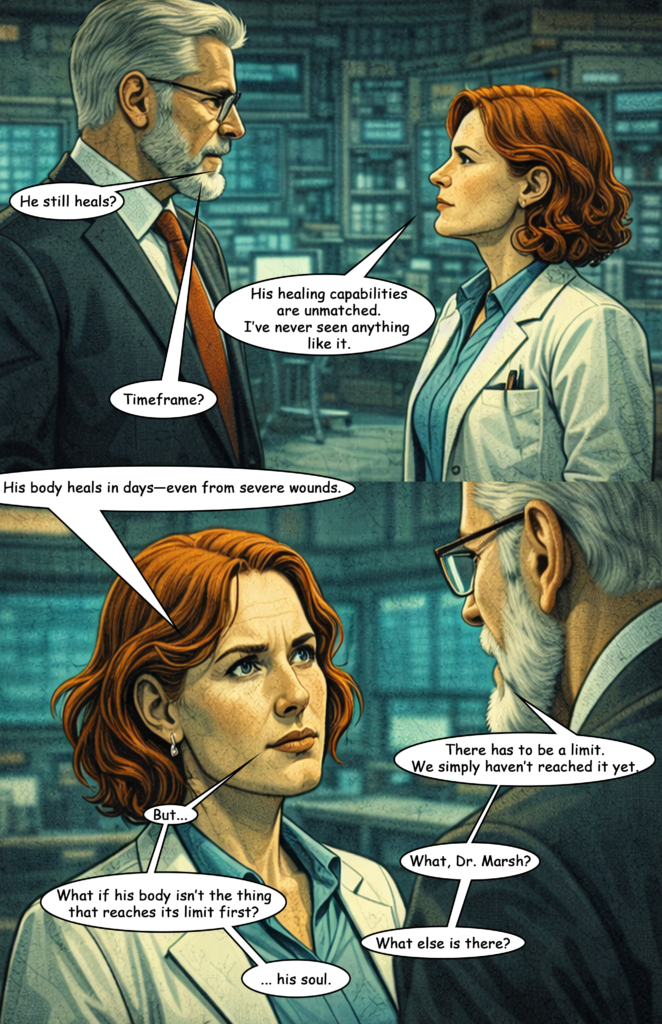

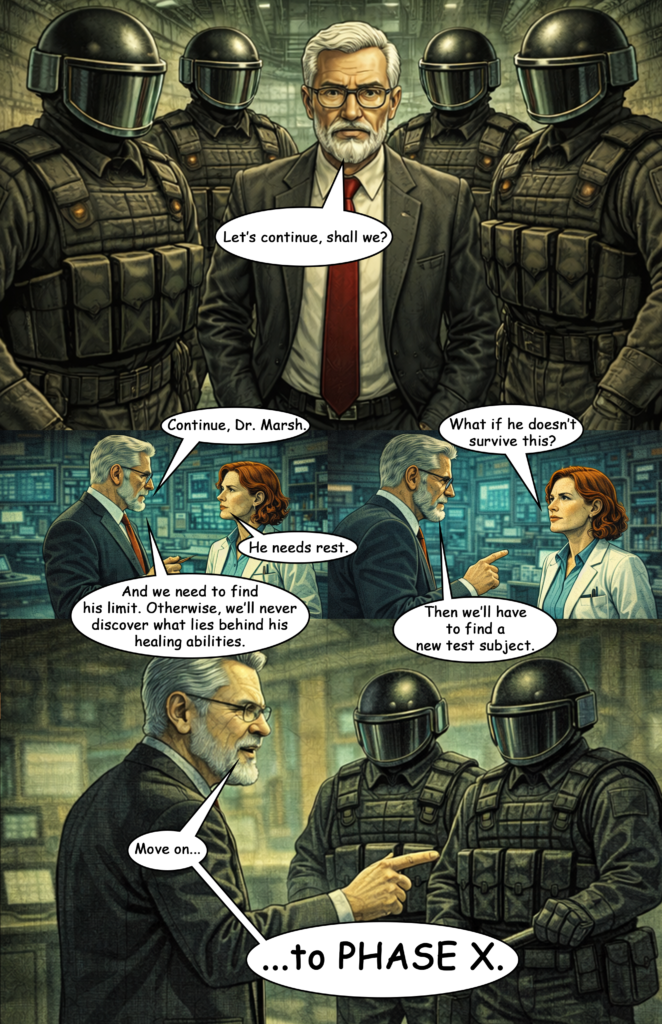

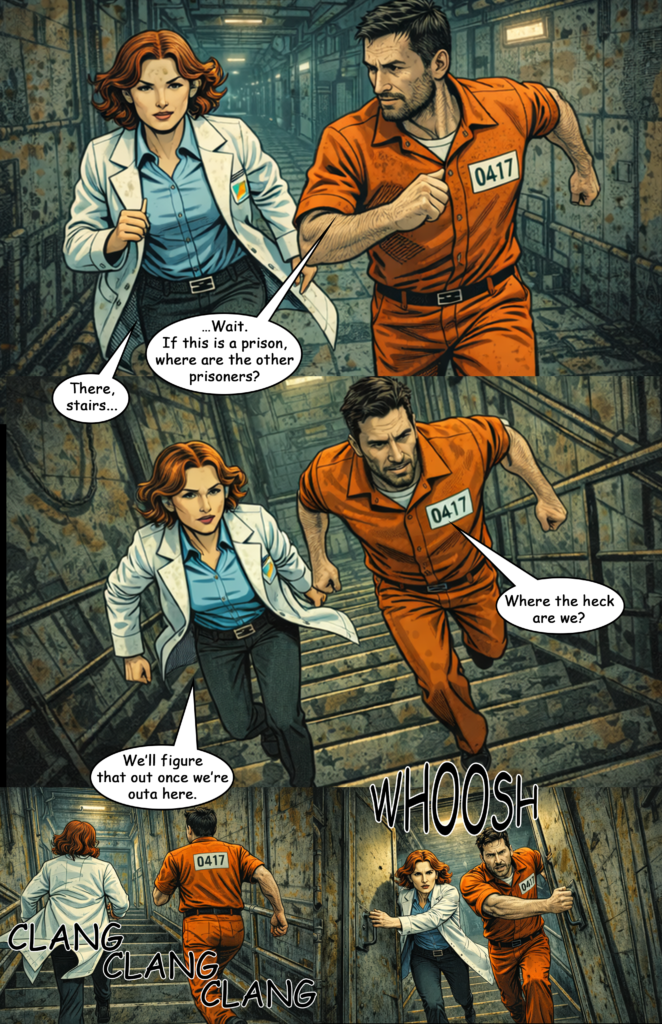

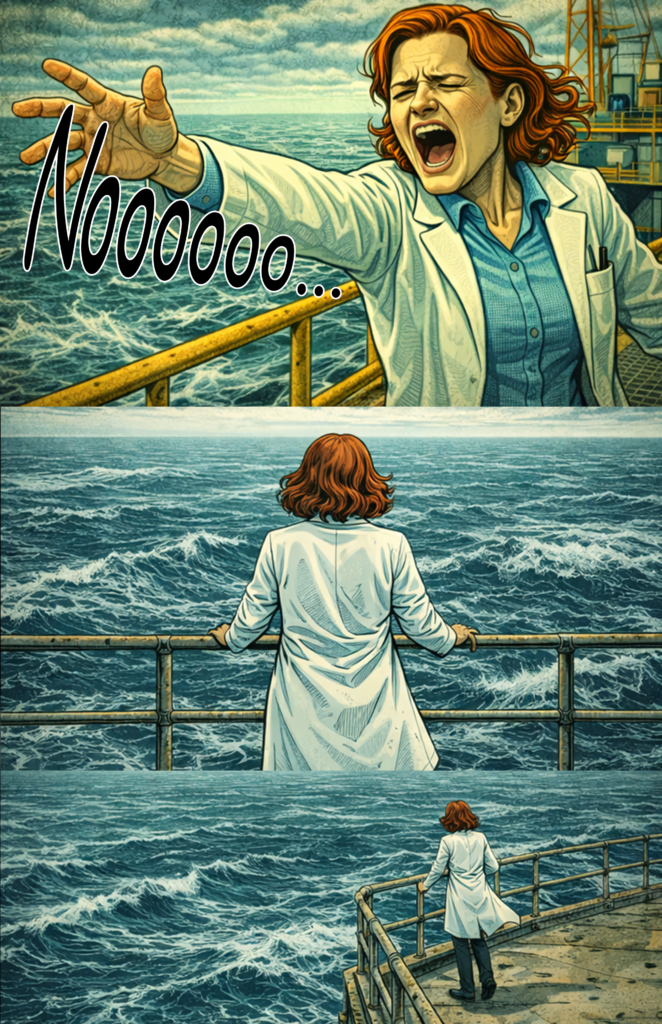

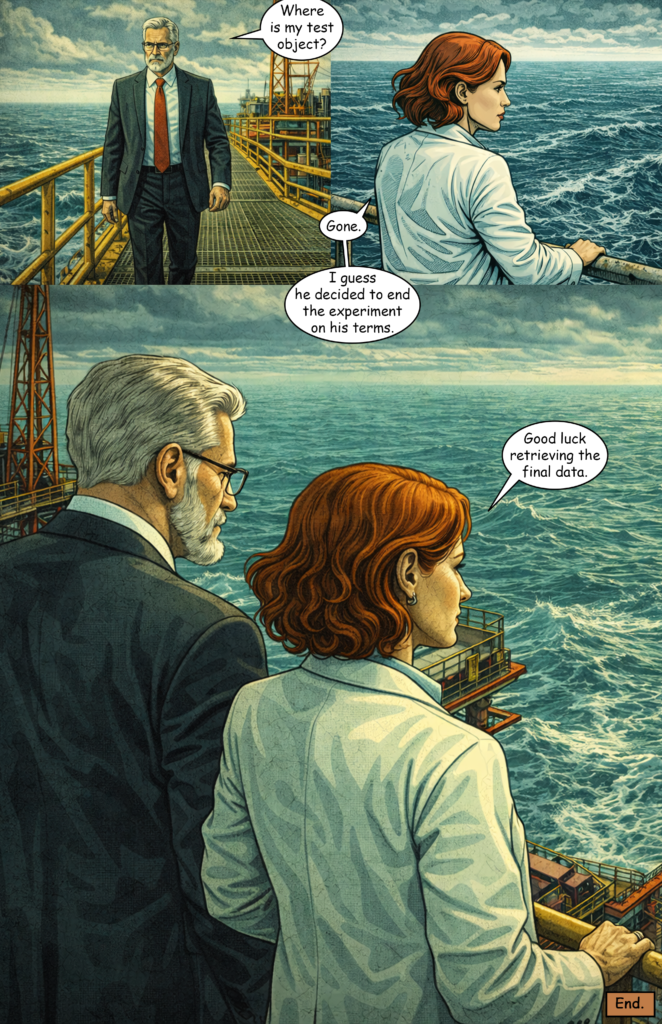

My first attempt at a comic made with ChatGPT (version 5) is finished: The Last Superhero Part 3.

Right now, I’d say the result is maybe 20% of what I would like it to be. I plan to create Part 4 in the next couple of weeks and try to improve the results, as there is still some room for experimentation.

For now, here are the biggest problems I encountered.

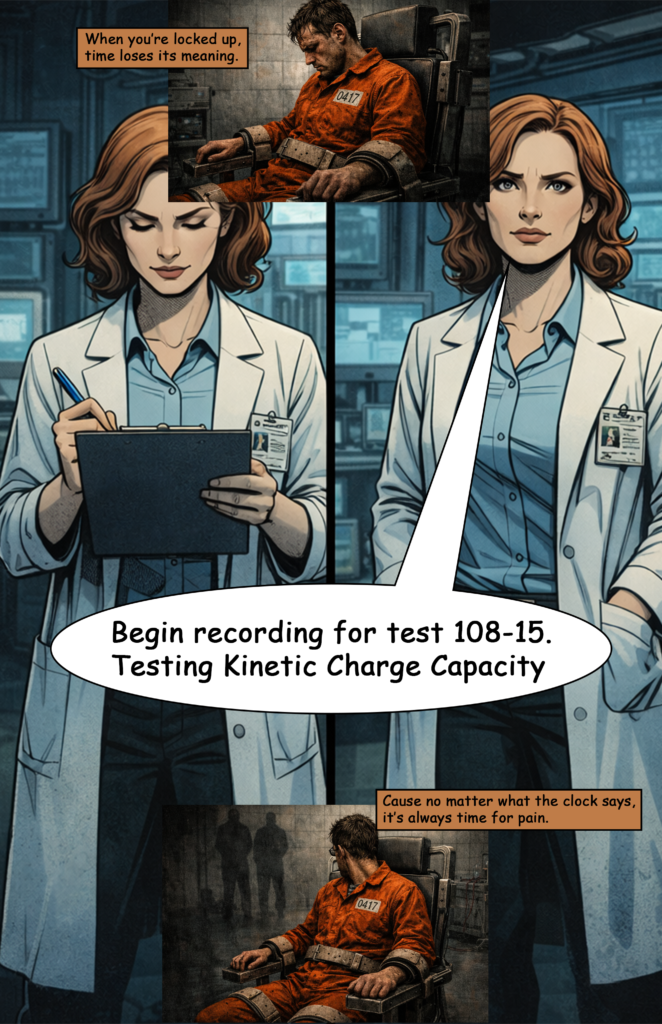

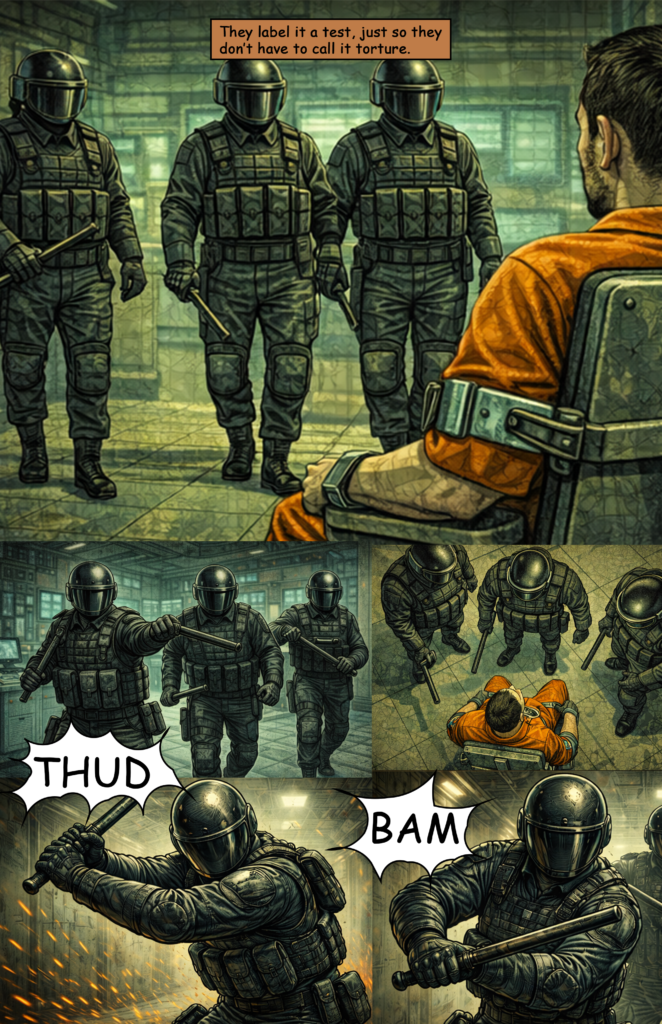

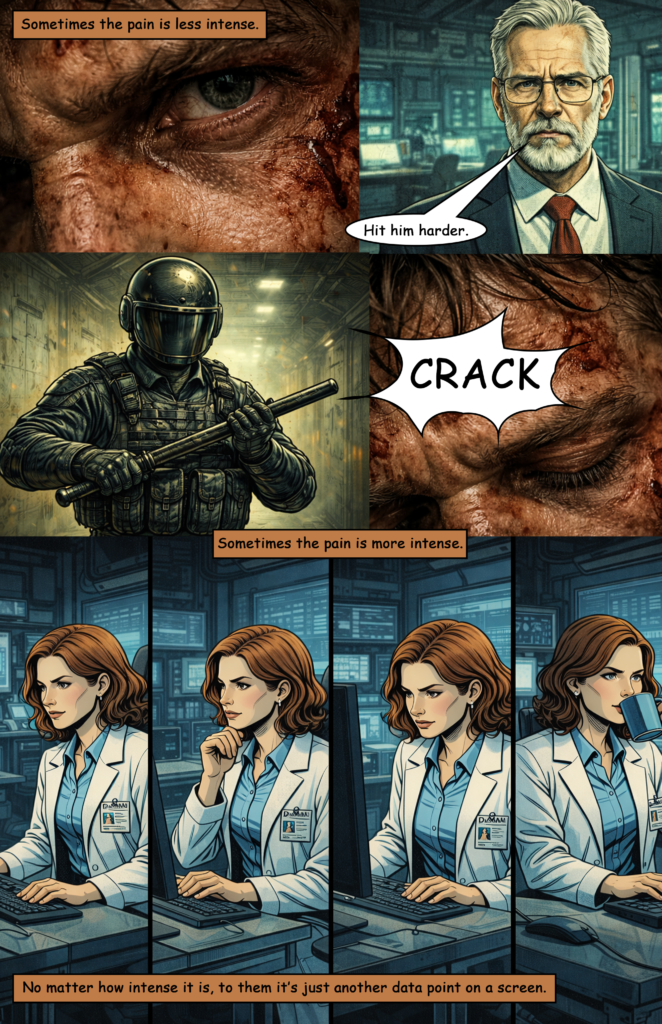

Language Filter

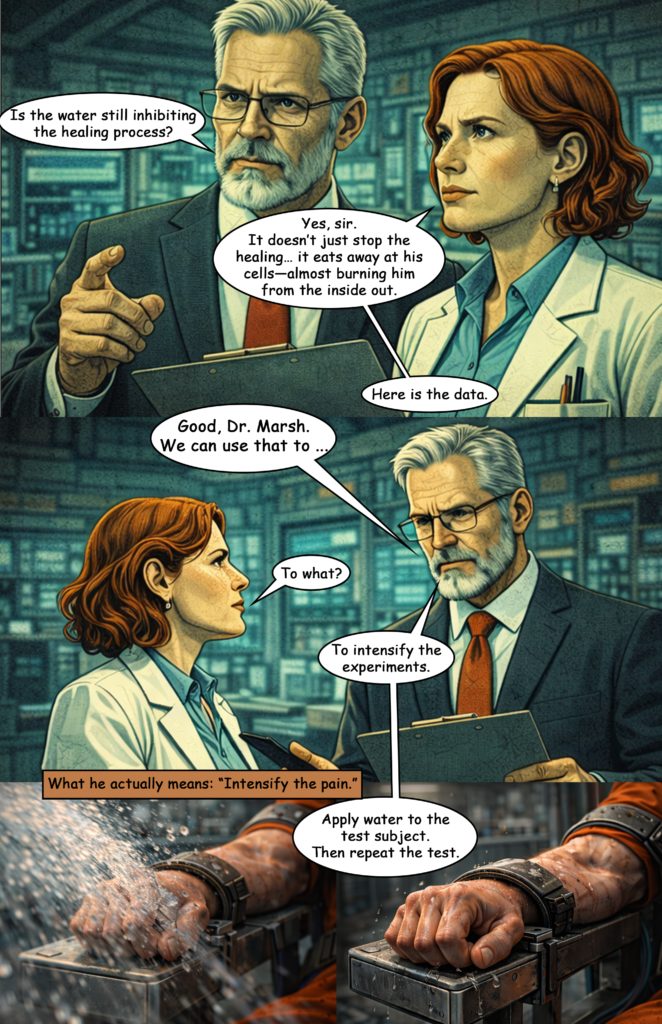

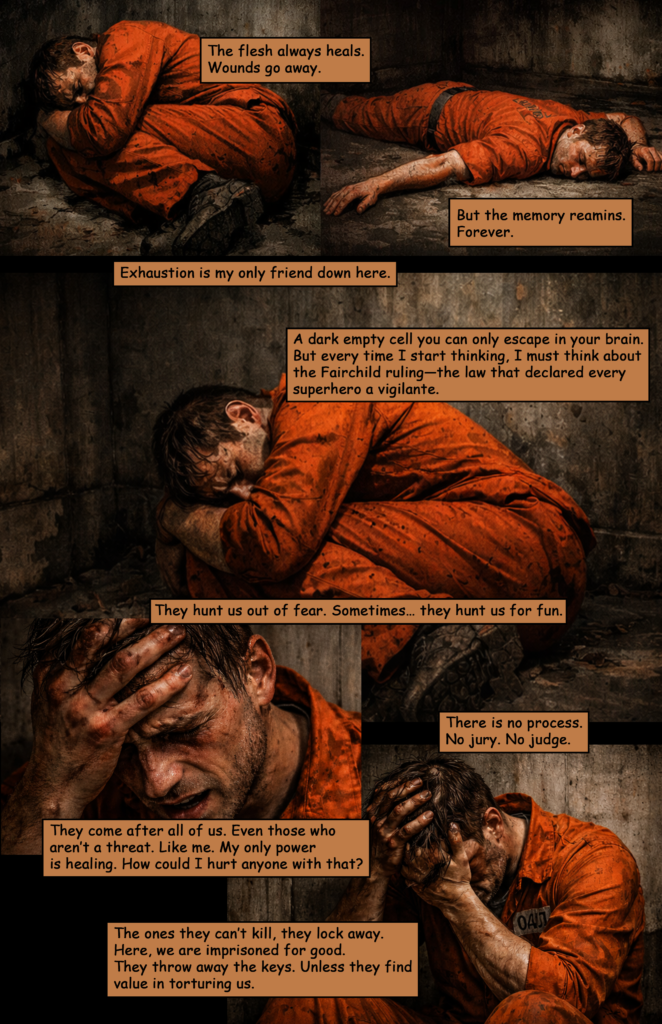

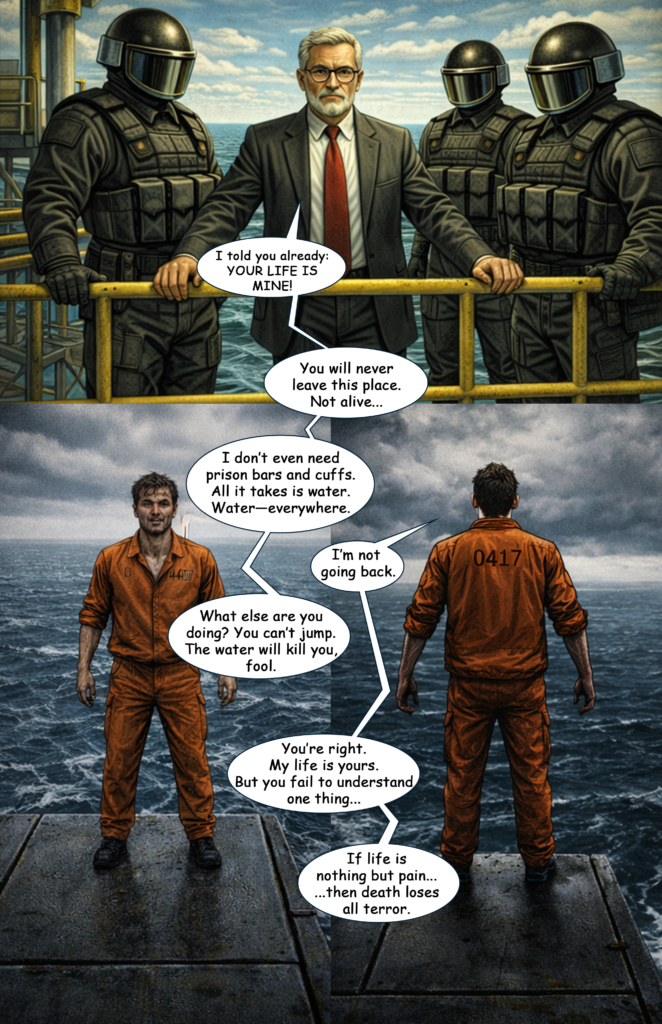

Just like Midjourney, ChatGPT has a very strict language filter for image prompts. For text generation, you can tell ChatGPT that you’re working in a fictional setting. That allows you to describe certain acts of violence or crime to some degree.

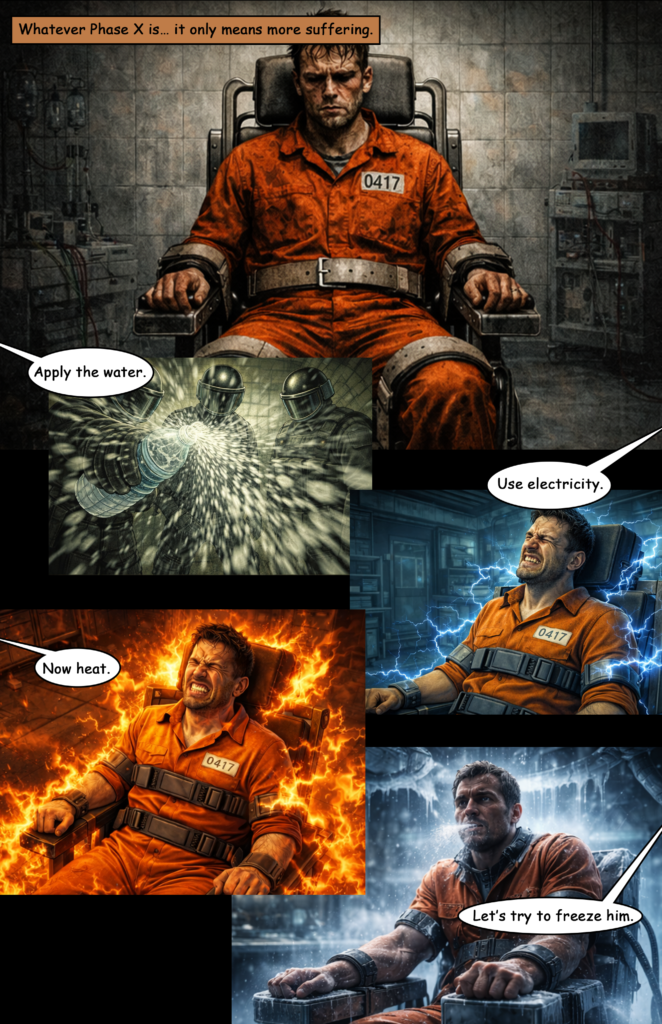

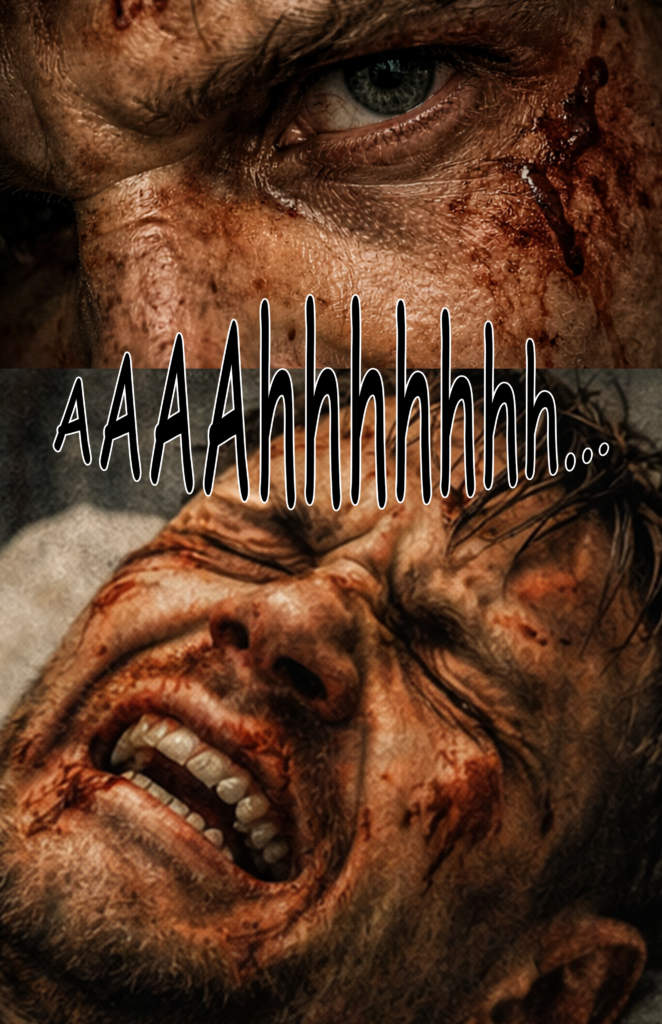

With image generation, however, this isn’t possible at all. Even hinting at violence in a comic-book context can trigger the filter.

For example, I had problems generating an image where a character gets water splashed onto his face. That alone triggered the filter.

The same happens with facial expressions. Pain alone might work, but pain combined with bruises often gets flagged — even without describing the action that caused them.

Time Limits for Image Generation

Don’t even try using the free version.

You might only get two or three images every couple of hours. For my 31-page comic, I generated more than 120 images.

Even the paid version has timeouts. After roughly every 20 images, ChatGPT asked me to wait a couple of hours before I could continue generating more.

Midjourney handles this much better — especially considering that the prices are somewhat comparable.
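To put the paid-plan timeouts in perspective, here is a rough back-of-the-envelope sketch. It assumes exactly 20 images per batch and a 2-hour wait, which are round stand-ins for "roughly 20" and "a couple of hours". The real limits are not documented here and probably vary.

```python
import math

def waiting_time(total_images: int, batch_size: int, wait_hours: float) -> tuple[int, float]:
    """Return (number of forced pauses, total hours spent waiting)."""
    batches = math.ceil(total_images / batch_size)
    pauses = max(batches - 1, 0)  # no pause is needed after the final batch
    return pauses, pauses * wait_hours

# Assumed values: 120 images, 20 per batch, 2 hours per pause.
pauses, hours = waiting_time(total_images=120, batch_size=20, wait_hours=2.0)
print(f"{pauses} pauses, about {hours:.0f} hours of waiting")
```

Under those assumptions, 120 images mean five forced pauses, or about ten hours of waiting on top of the actual generation time.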

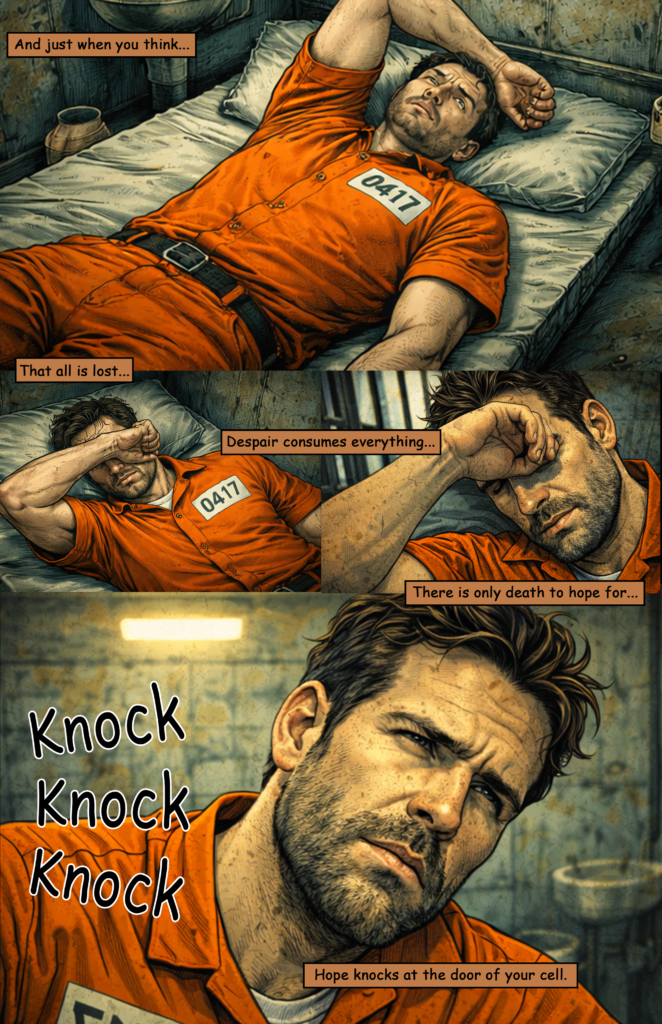

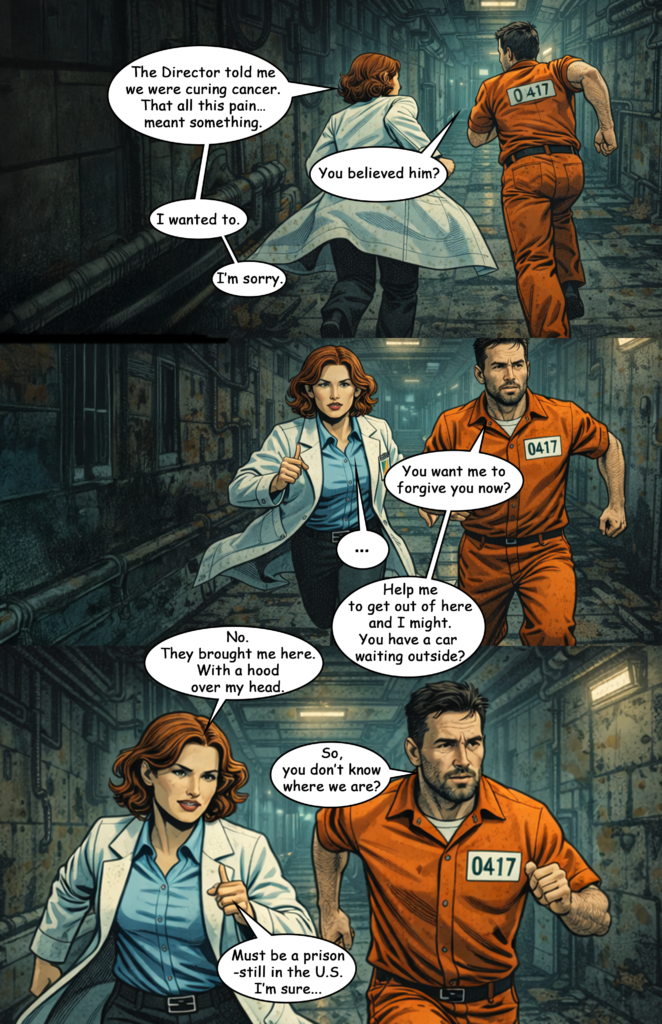

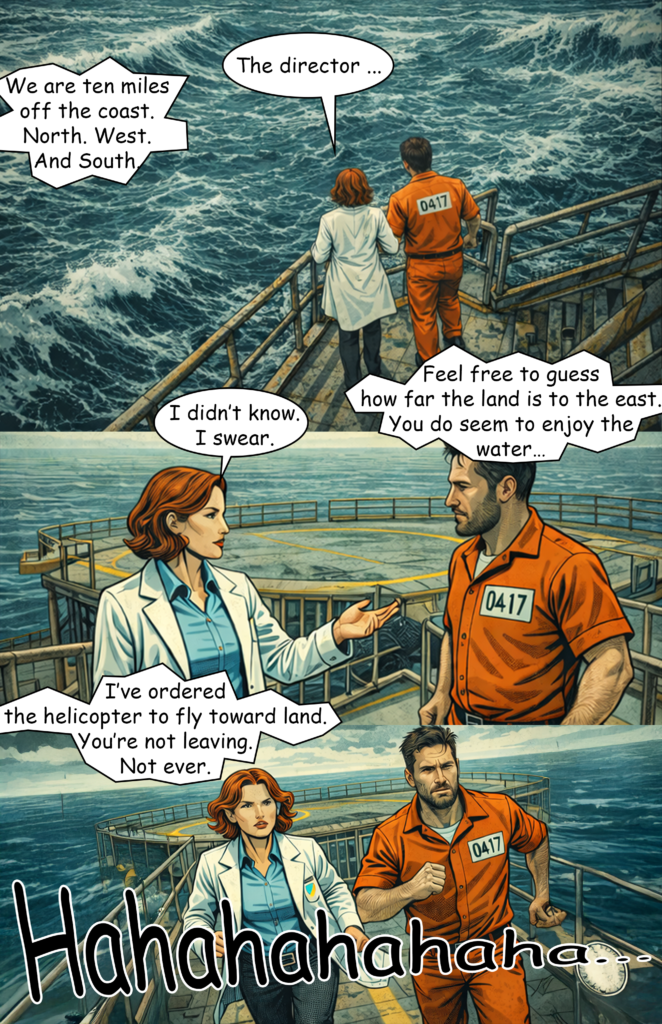

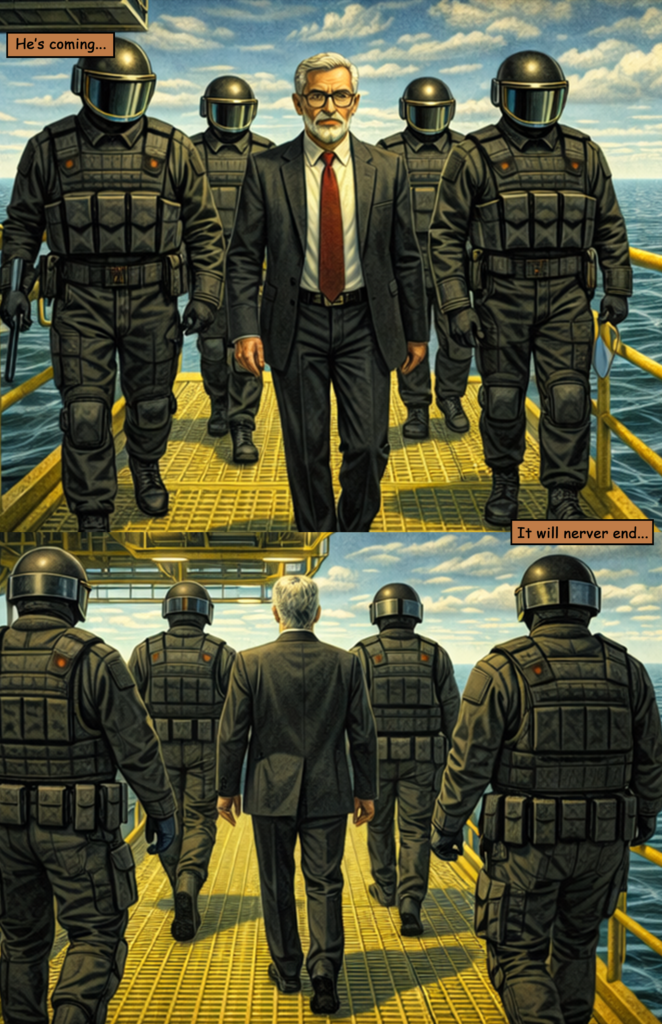

Style Drift

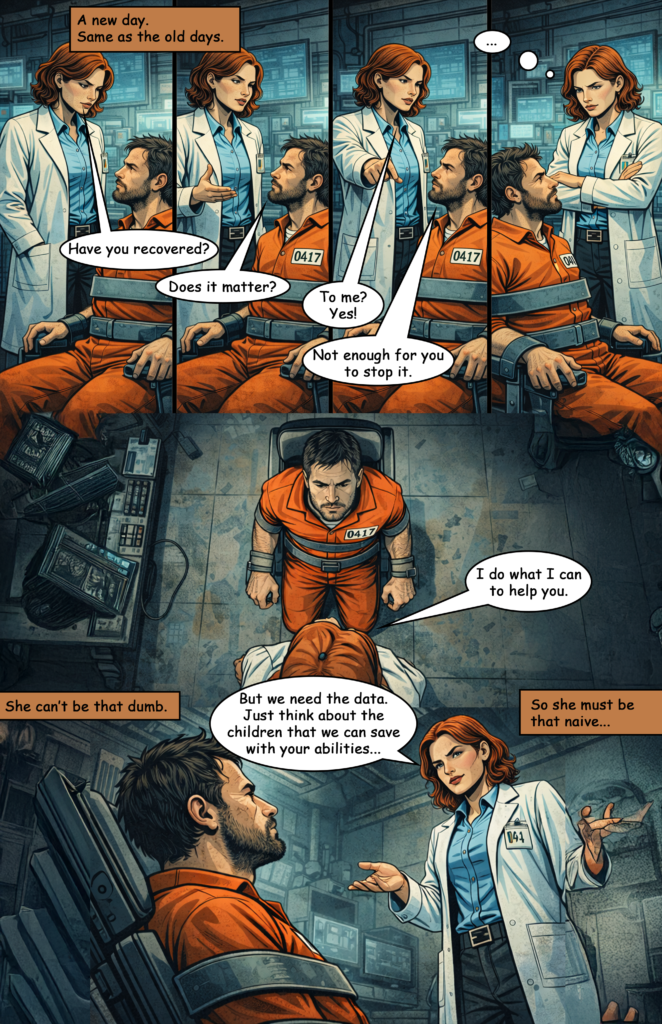

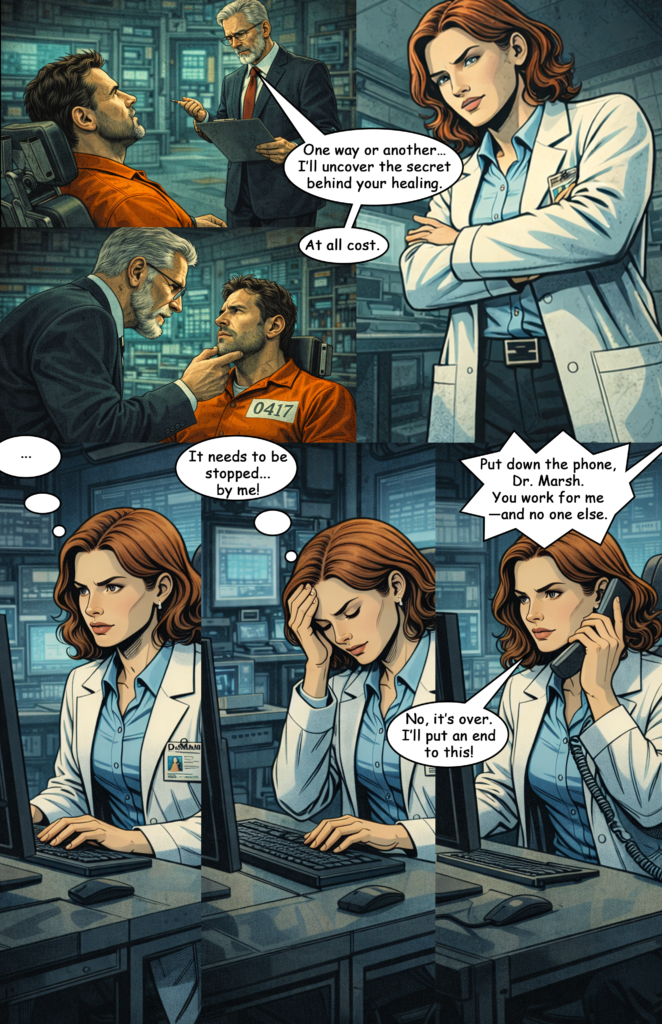

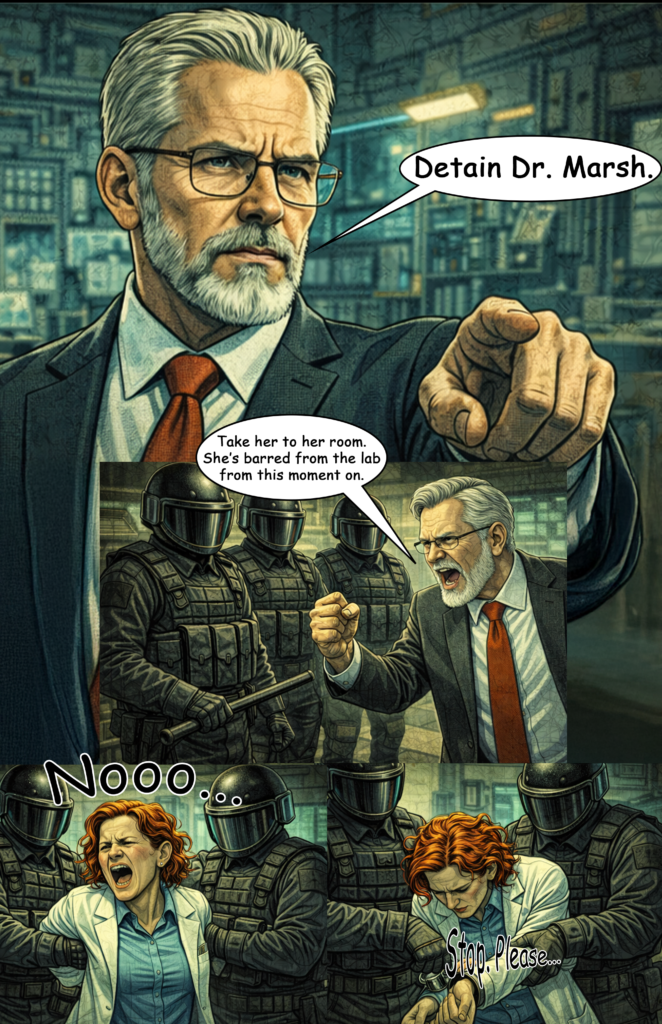

You can clearly see how the comic switches between different art styles. I tried to anchor the prompts around a specific comic-book artist, but every few images the style drifted again.
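One thing that might be worth trying is to keep the style wording identical in every prompt instead of retyping it. The sketch below only shows the idea. The `STYLE_ANCHOR` text and the `panel_prompt` helper are placeholders invented for illustration, and there is no guarantee this reduces drift.

```python
# Placeholder style wording; swap in whatever anchor you actually use.
STYLE_ANCHOR = "comic-book panel, bold ink outlines, flat colors"

def panel_prompt(description: str, style: str = STYLE_ANCHOR) -> str:
    """Prefix a panel description with the same, unchanged style text."""
    return f"{style}. {description.strip()}"

# Hypothetical panel descriptions, just to show the output shape.
prompts = [
    panel_prompt(d)
    for d in ["A man waits at a bus stop.", "  A doctor reads a chart. "]
]
for p in prompts:
    print(p)
```

Because the style text lives in one place, every panel starts with exactly the same words, which at least removes accidental rewording as a possible cause of drift.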

Prompt “Fading”

I’ve seen this with Midjourney as well. When a prompt becomes too long, parts of it seem to fade away, and the AI simply ignores those sections.
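A possible way to experiment with this is to rank the parts of a prompt and drop the least important ones once a word budget is reached. This is only a sketch. The `build_prompt` helper, the priority numbers, and the `max_words` budget are all assumptions made for illustration, not documented limits of ChatGPT or Midjourney.

```python
def build_prompt(parts: list[tuple[int, str]], max_words: int = 60) -> str:
    """Join prompt fragments in priority order, skipping any that would exceed max_words.

    parts: (priority, text) pairs; a lower number means more important.
    max_words: a hypothetical budget, not a known limit of any tool.
    """
    kept, used = [], 0
    for _, text in sorted(parts, key=lambda p: p[0]):
        n = len(text.split())
        if used + n > max_words:
            continue  # skip this fragment; a shorter, lower-priority one may still fit
        kept.append(text)
        used += n
    return ", ".join(kept)

# Made-up fragments for illustration only.
parts = [
    (2, "rainy rooftop at night"),
    (1, "middle-aged man with a short grey beard"),
    (3, "neon signs reflected in puddles, distant traffic, steam rising from vents"),
]
print(build_prompt(parts, max_words=12))
```

With a budget of 12 words, the character and the location are kept and the long background fragment is dropped, so the parts that matter most are the ones that stay in the prompt.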

Character Consistency

Clothing and the general appearance are mostly fine, but the face of my protagonist drifted quite a lot.

Character consistency remains one of the biggest issues, especially if you attempt to create something larger like a 160-page comic.

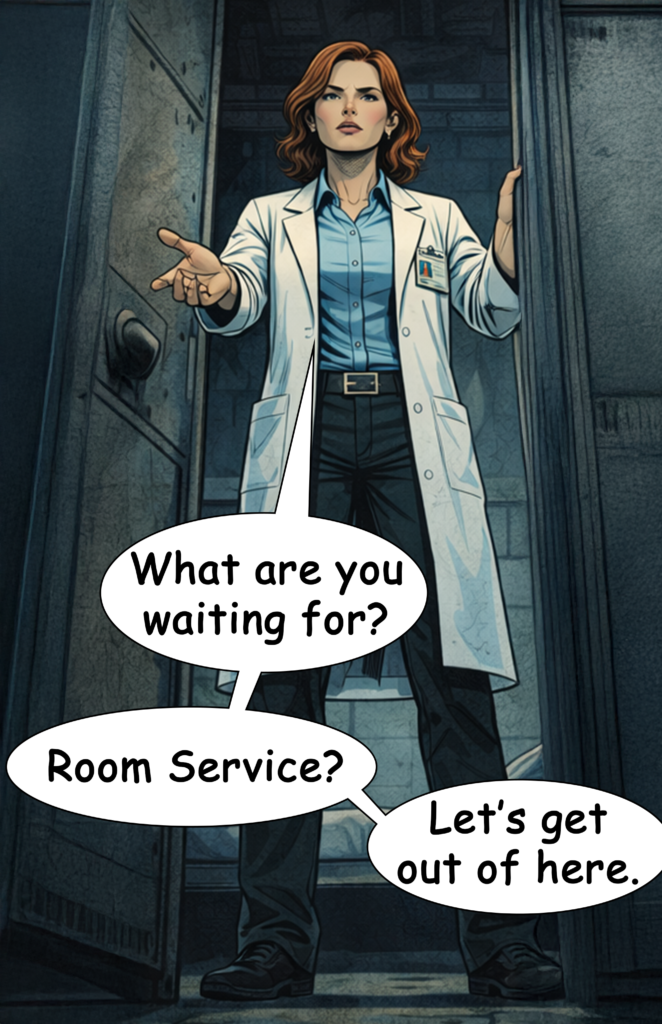

Facial Details

Facial details are very difficult to control. My character’s beard looks slightly different in almost every image, and the hairstyle of the female doctor changes frequently as well.

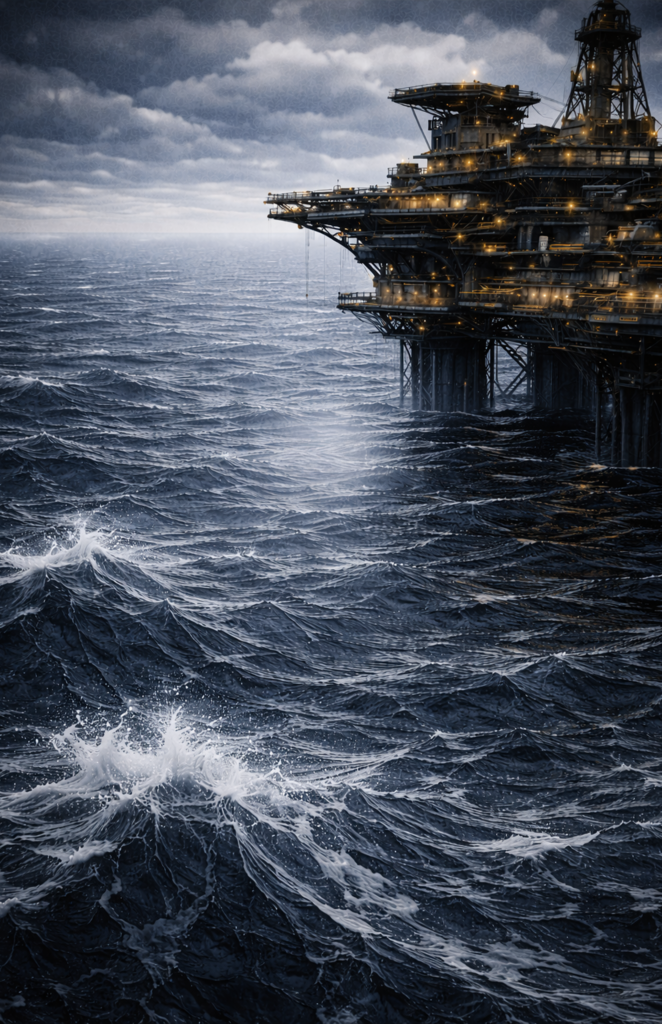

Environment Consistency

This is similar to the character consistency problem. The more detailed the environment, the harder it becomes to keep it consistent across multiple images.

Chats Become Clunky and Glitchy

It helps to generate all images within the same chat, but once the conversation reaches around 20 prompts, the chat becomes sluggish and sometimes even glitches.

User Experience

Overall, Midjourney still offers a better user experience. It’s easier to fine-tune prompts, results arrive faster, and the whole process feels more controlled.

Conclusion

There are quite a few issues. I think some of them can be mitigated with better prompting and a couple of workarounds.

For now, I would still recommend Midjourney for AI comics. That said, with a few adjustments I might be able to get better results with ChatGPT when creating Part 4 of The Last Superhero.