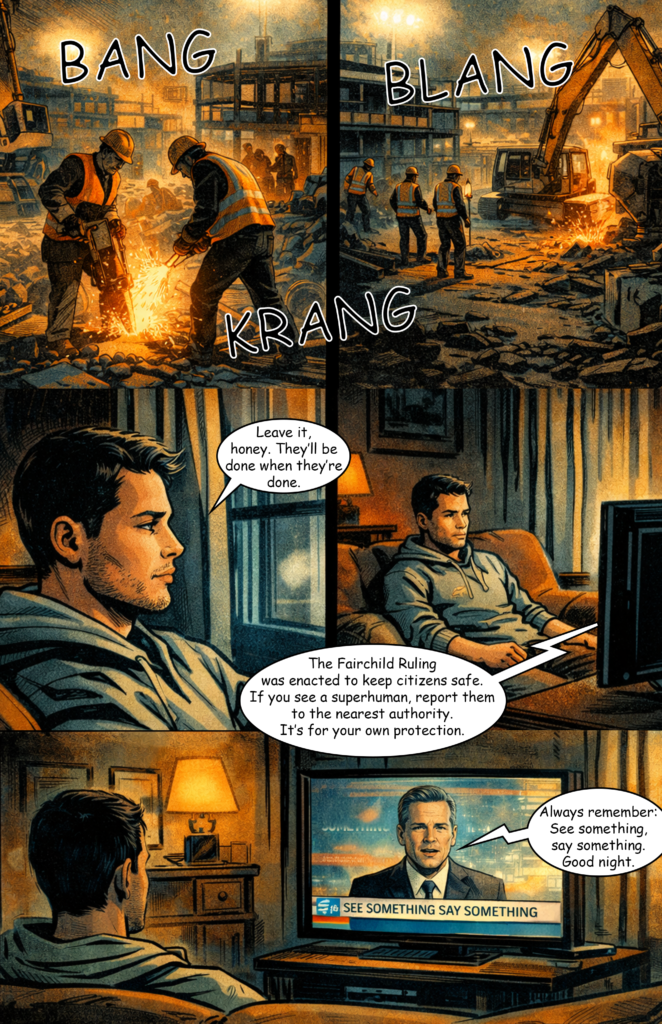

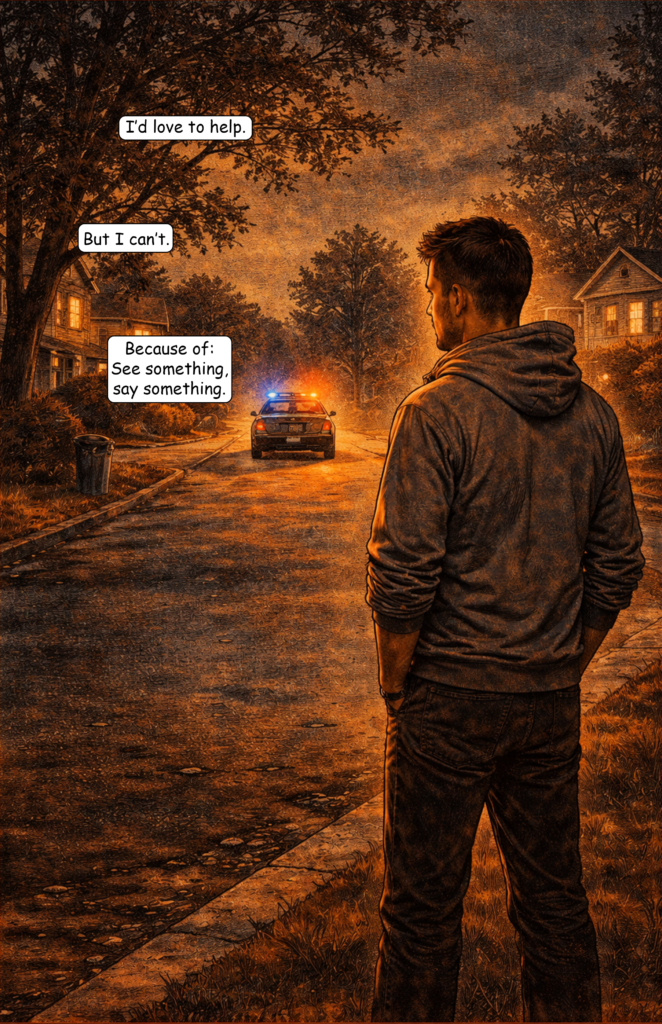

I got back into creating AI comics this year, starting with tests using ChatGPT’s latest image model, V5. You can read both generated comic stories here:

Part 3 was mainly a first test to see what the image model is capable of. In Part 4, I applied what I learned and aimed for better results. I’d say Part 3 delivered about 20% of what I had in mind, while Part 4 got closer to 60%.

What I Did to Improve the Results

Daily Limits

There is a daily limit on how many images you can generate, even with a paid subscription. The free version gives you fewer than a handful of generations a day, making it unusable for a project like this.

To work around this, I focused on one or two pages per day and generated only the images needed for those pages. This way, I never hit the limit before finishing my daily work.

Generate More Images Per Prompt

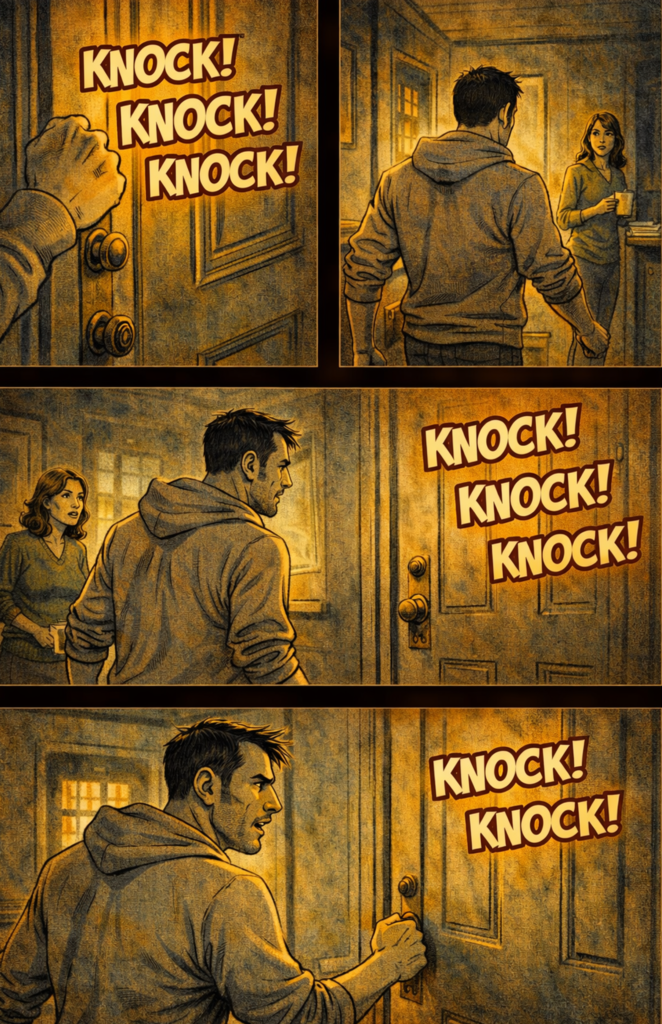

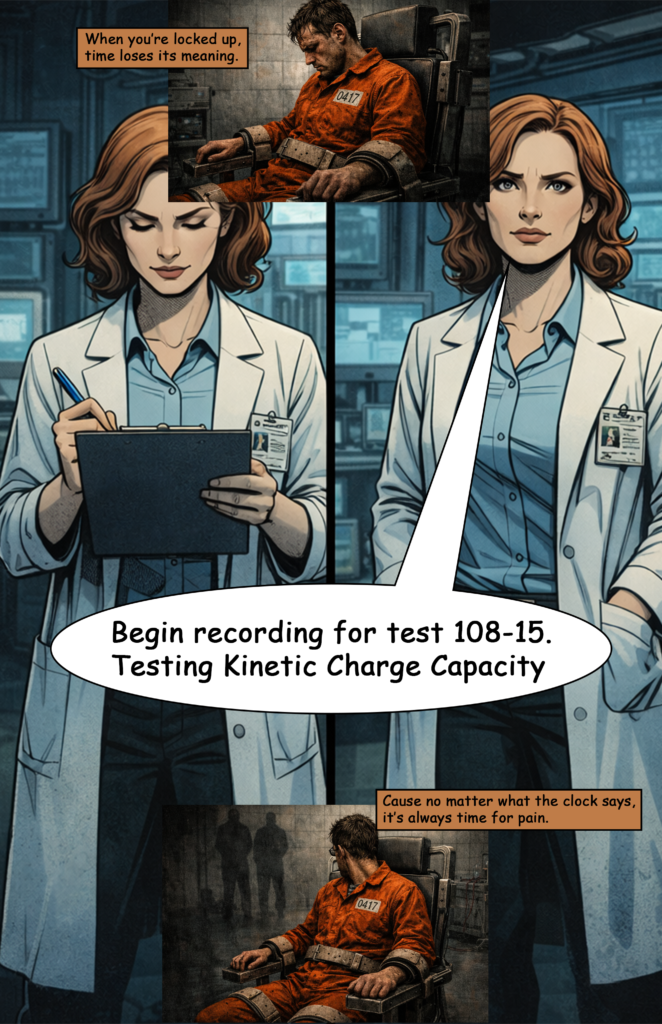

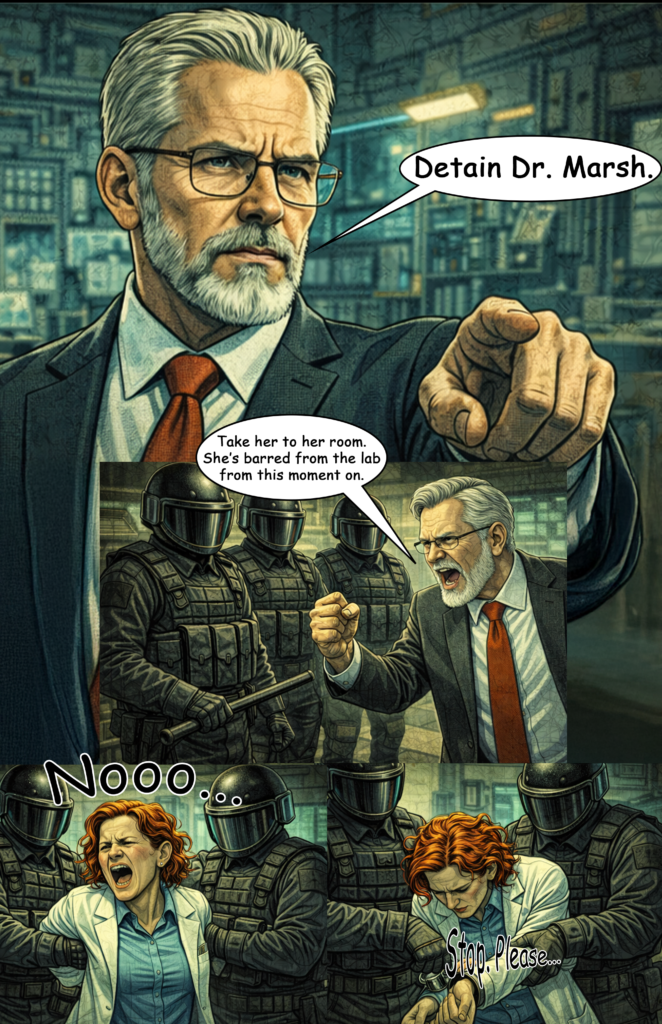

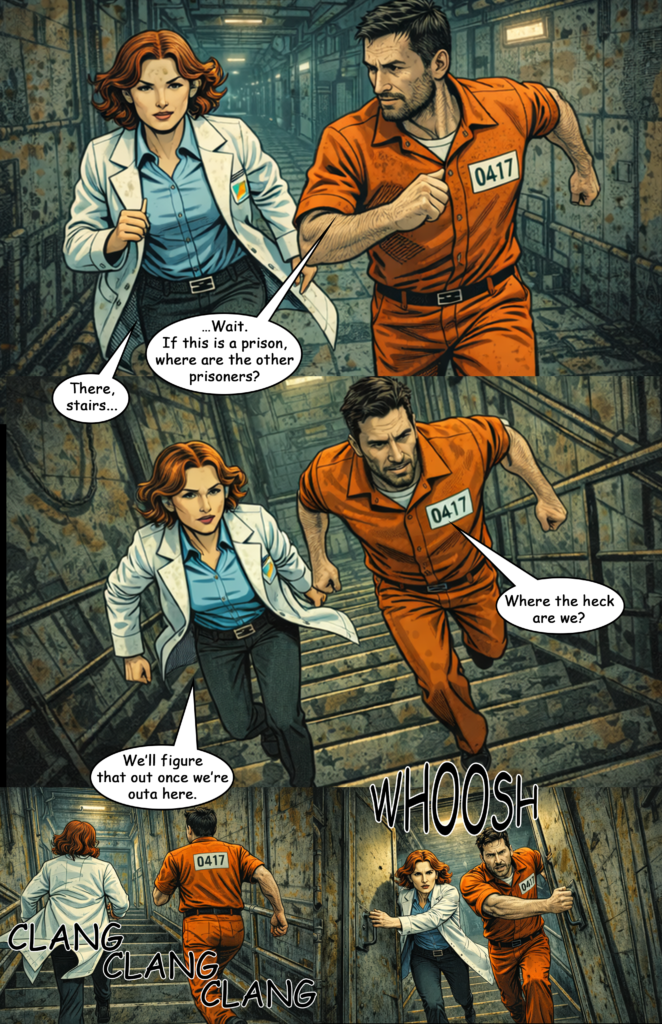

I recommend adding a token like “comic page layout” or “4 images in one with different perspectives” to every prompt. This gives you multiple images in a single generation.

Yes, the resolution drops, but if that matters to you, upscale the images afterwards.

Use Master Prompts

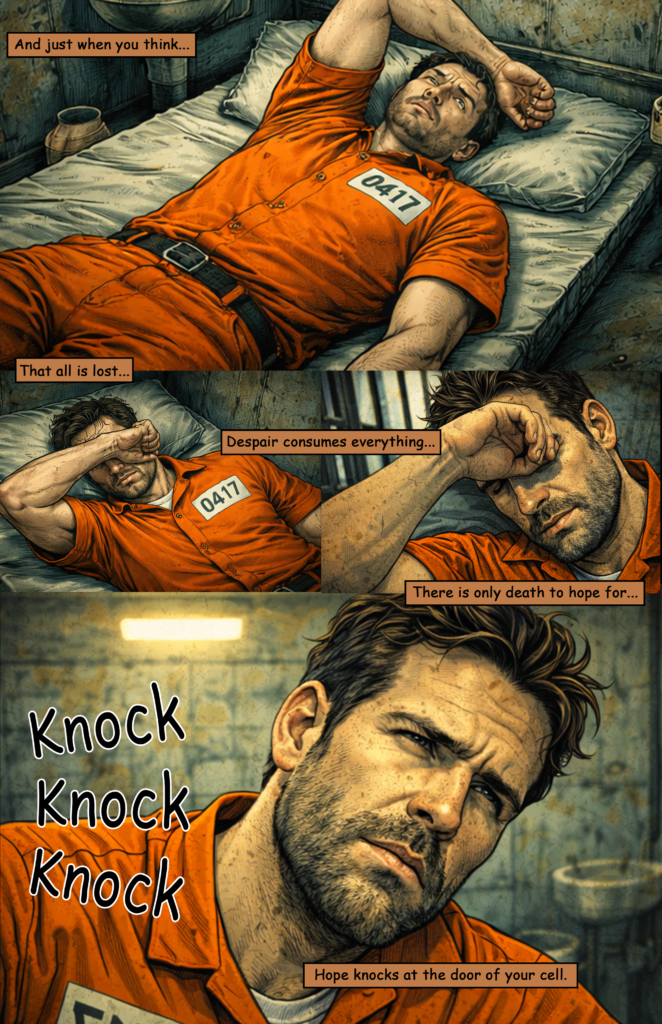

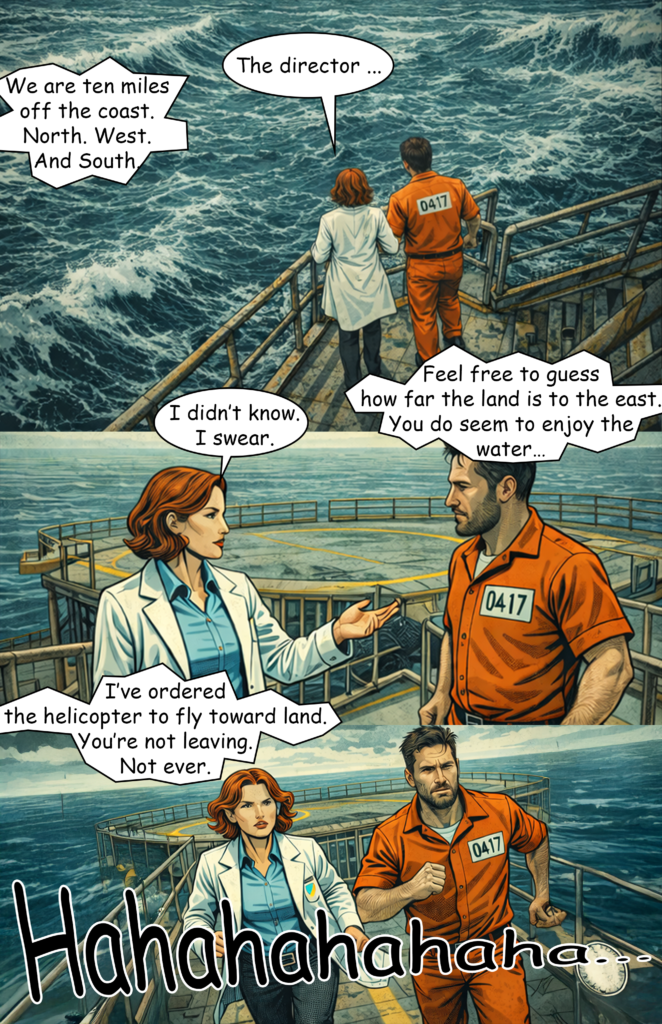

I created general “master prompts” for style and main characters, and even experimented with settings.

For example, “modern noir comic style” helps keep the visual style consistent and reduces style drift. The same applies to characters: “man in his 30s, short brown hair, grey hoodie”.

Using these consistently improves overall coherence—although perfect consistency is still not possible.
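One way to keep master prompts consistent is to store them once and reuse them for every scene. The following is a minimal sketch, not the workflow I actually used: the names `STYLE_MASTER`, `CHARACTER_MASTERS`, and `with_masters` are hypothetical, and the scene text in the usage line is an invented placeholder. Only the two master prompt strings come from the examples above.

```python
from typing import Optional

# Master prompts quoted in the text above; the variable names are hypothetical.
STYLE_MASTER = "modern noir comic style"
CHARACTER_MASTERS = {
    "main": "man in his 30s, short brown hair, grey hoodie",
}


def with_masters(scene: str, character: Optional[str] = None) -> str:
    """Prefix a scene description with the style and (optionally) a character master prompt."""
    parts = [STYLE_MASTER]
    if character is not None:
        parts.append(CHARACTER_MASTERS[character])
    parts.append(scene)
    return ", ".join(parts)


# Placeholder scene, only for illustration.
print(with_masters("standing in the rain", character="main"))
```

Because the master strings live in one place, changing the style or a character description updates every prompt built afterwards.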

Avoid Prompt Fading

If you add too many tokens to a prompt, the AI starts ignoring some of them—what I call “prompt fading.”

To avoid this, keep prompts short and focused. Use your master prompts, but limit scene descriptions to about 4–5 key elements and around 10 tokens max.
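A quick length check before pasting a scene description can be sketched like this. It assumes that a “token” means one comma-separated phrase, which is my reading of the term here and not how the image model itself counts tokens. The function names and the sample scene are hypothetical.

```python
from typing import List


def scene_tokens(scene: str) -> List[str]:
    """Split a scene description into comma-separated phrases, dropping empty ones."""
    return [part.strip() for part in scene.split(",") if part.strip()]


def is_short_enough(scene: str, max_tokens: int = 10) -> bool:
    """Return True if the scene stays within the rough limit suggested above."""
    return len(scene_tokens(scene)) <= max_tokens


# Sample scene description, only for illustration.
scene = "standing, watching suburban street, police car in distance, flashing lights"
print(len(scene_tokens(scene)), is_short_enough(scene))
```

The limit of 10 is only a default taken from the rule of thumb above; adjust it if your own prompts start fading earlier or later.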

Let ChatGPT Refine Your Prompts

Describe your scene roughly and include your master prompts, then ask ChatGPT to generate a clean, concise version.

Most of the time, you can copy and paste that result directly for better outcomes than writing prompts manually.

Stay in the Same Chat

ChatGPT seems to retain context within a conversation, which can help with consistency if you generate all images in the same chat.

The downside is that long chats become slow and can glitch. This is something OpenAI should improve.

Accept Imperfection

Tools like Midjourney offer more control through adjustable parameters. ChatGPT doesn’t yet provide that level of precision.

Perfection isn’t achievable right now, so aim for “good enough” for the time being.

Work Around Slow Generation

Image generation is noticeably slower than with Midjourney.

A simple solution is to multitask: edit existing images in Photoshop (or another tool) while new ones are being generated.

Create Reference Sheets

Before starting, I generated text-based sheets for characters, settings, style, and actions.

Whenever ChatGPT lost consistency, I re-uploaded the relevant sheet to get it back on track (especially for characters).

Use a Color Token

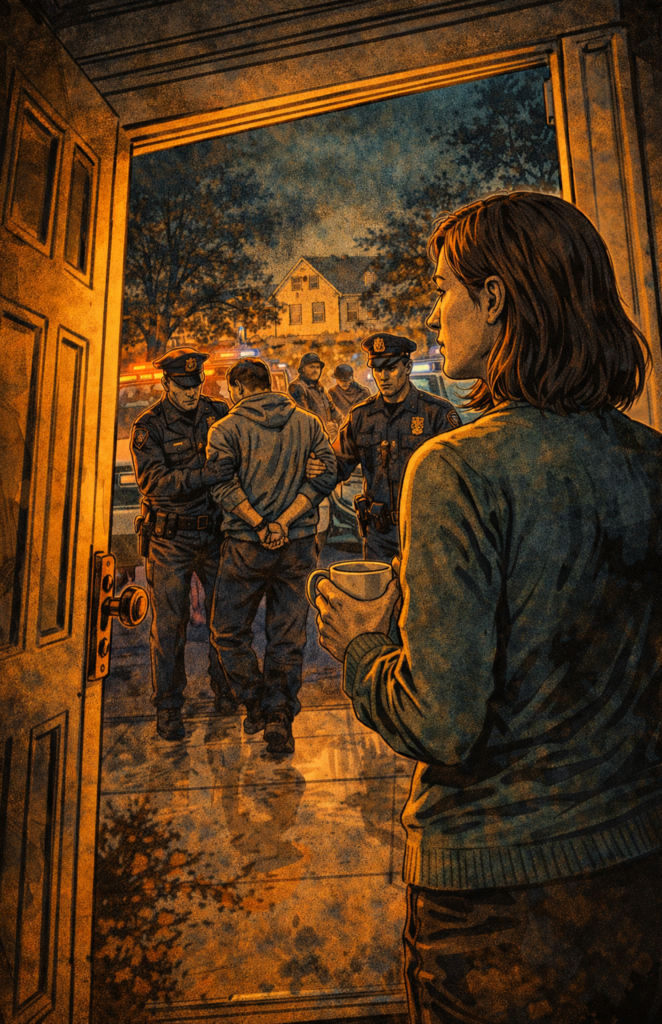

Adding a consistent color theme helps unify the look.

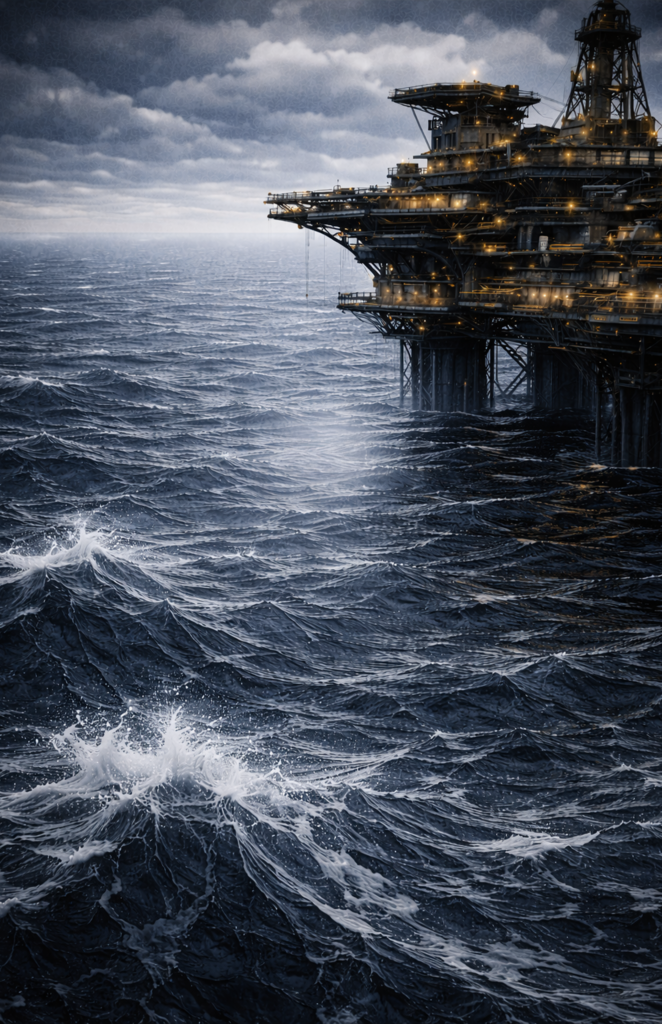

For example, I used “orange-teal palette” in every prompt, which made the entire comic feel visually cohesive.

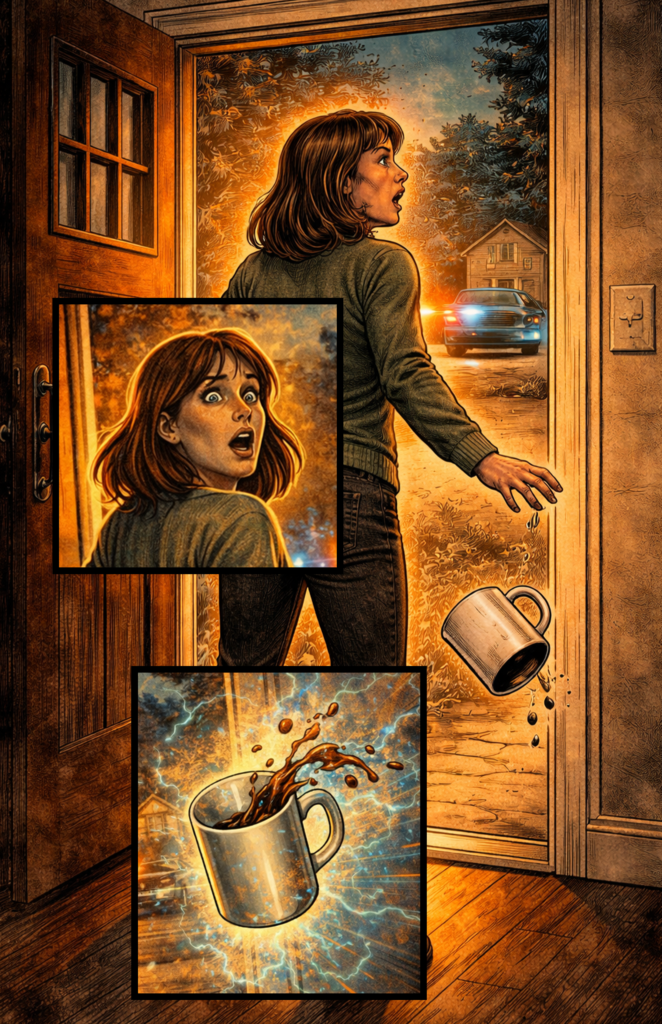

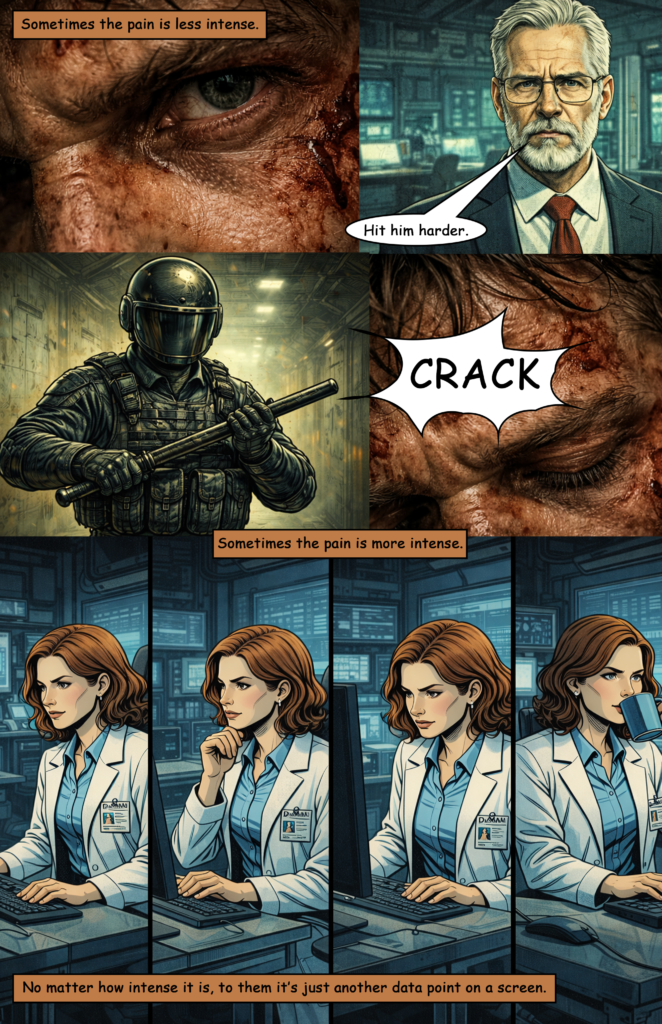

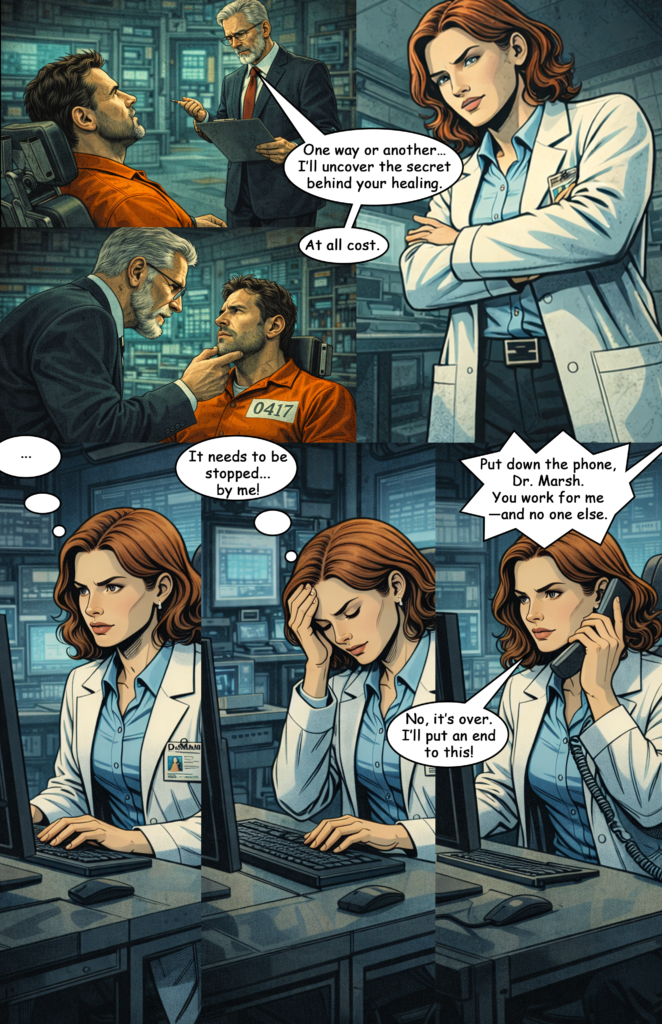

Generate Facial Close-Ups

Start by generating close-up facial expressions for each character. For example:

“man in his 30s, short brown hair, grey hoodie, angry, 4 images from different perspectives in one”

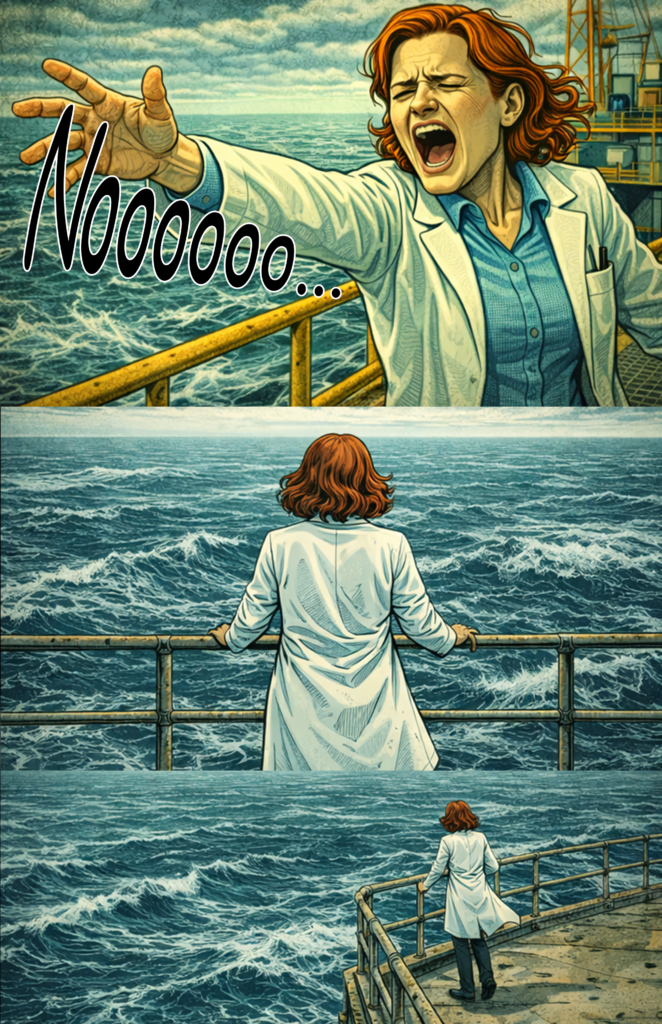

Do this for different emotions and characters. These images are useful for transitions between action scenes and as overlays.
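The close-up prompts can be produced in a batch so that every emotion uses exactly the same character wording. This is a rough sketch under a few assumptions: the function name `closeup_prompts` is hypothetical, and of the emotions listed, only “angry” comes from the example above; the others are placeholders.

```python
from typing import List

CHARACTER = "man in his 30s, short brown hair, grey hoodie"
MULTI_IMAGE = "4 images from different perspectives in one"

# Only "angry" appears in the example above; the rest are placeholder emotions.
EMOTIONS = ["angry", "sad", "surprised"]


def closeup_prompts(character: str, emotions: List[str]) -> List[str]:
    """Build one close-up prompt per emotion, keeping the character text identical."""
    return [f"{character}, {emotion}, {MULTI_IMAGE}" for emotion in emotions]


for prompt in closeup_prompts(CHARACTER, EMOTIONS):
    print(prompt)
```

Each printed line can be pasted into the chat as a separate generation.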

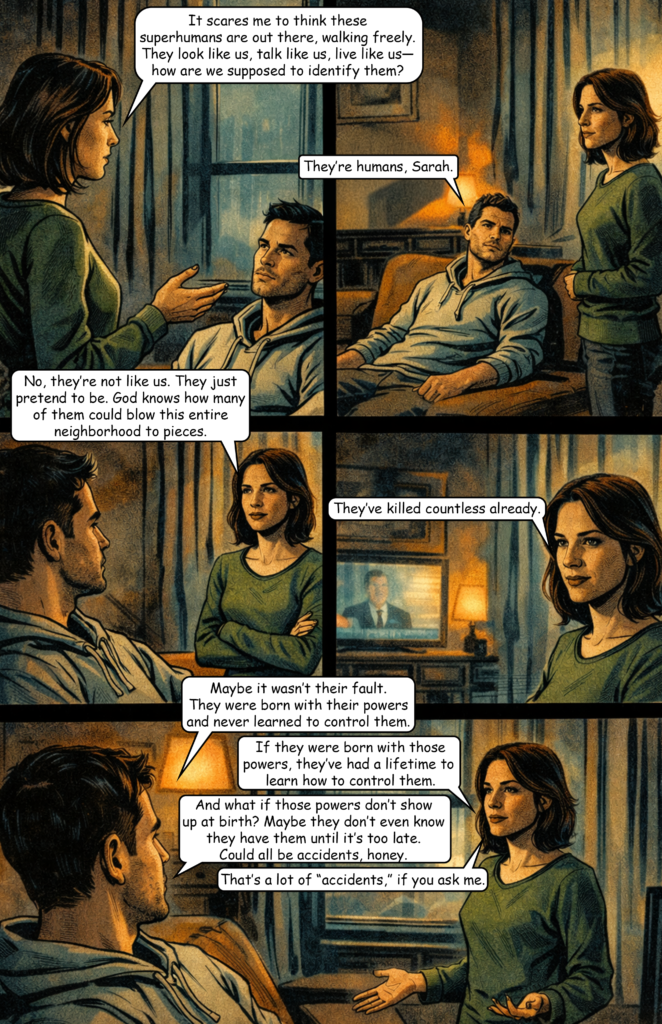

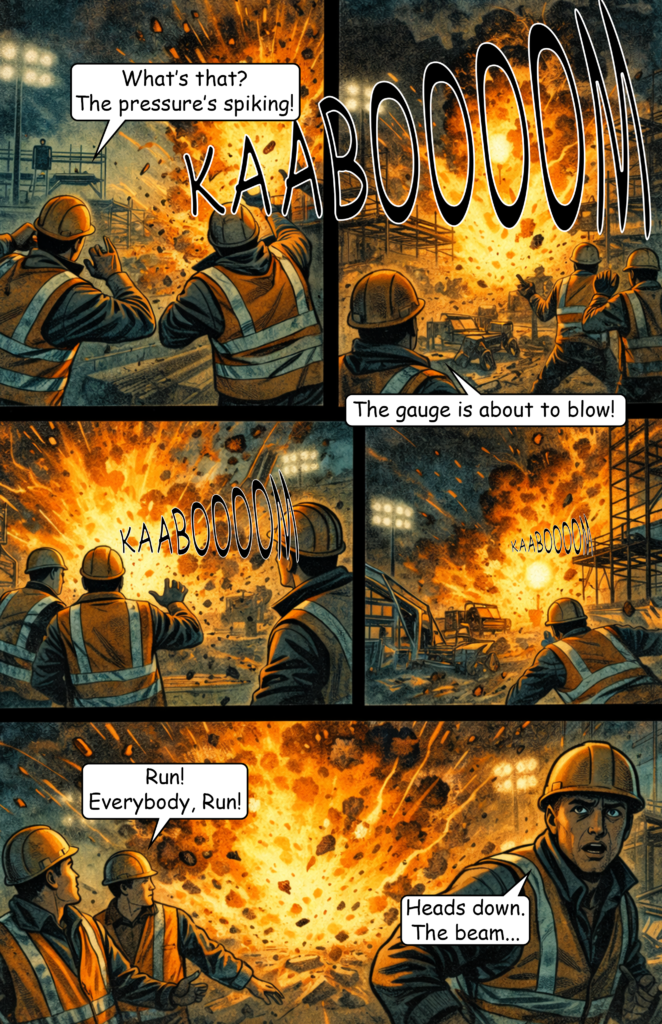

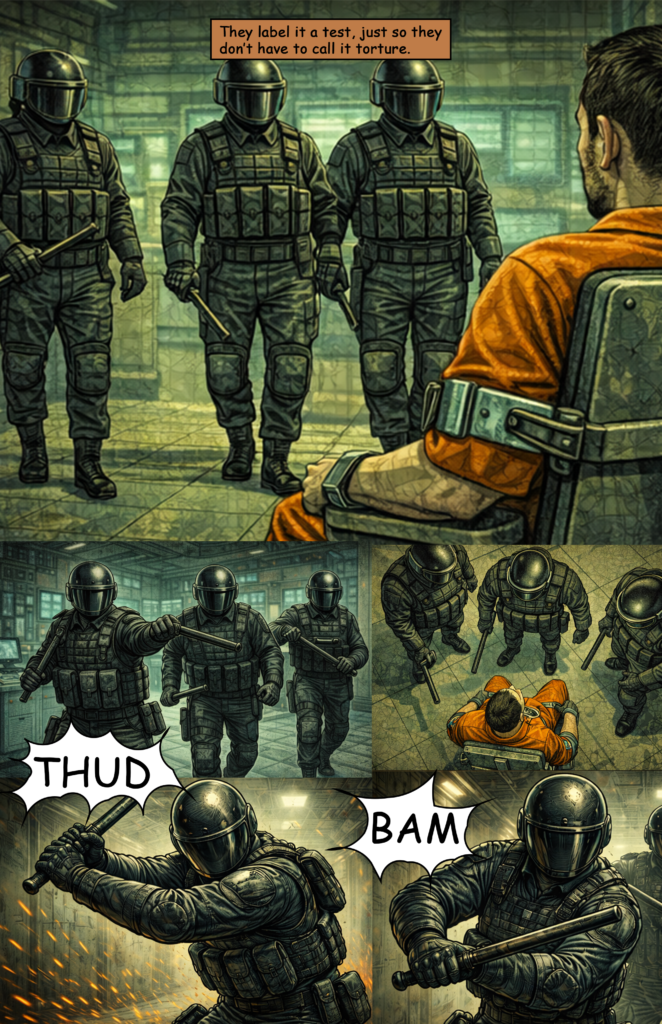

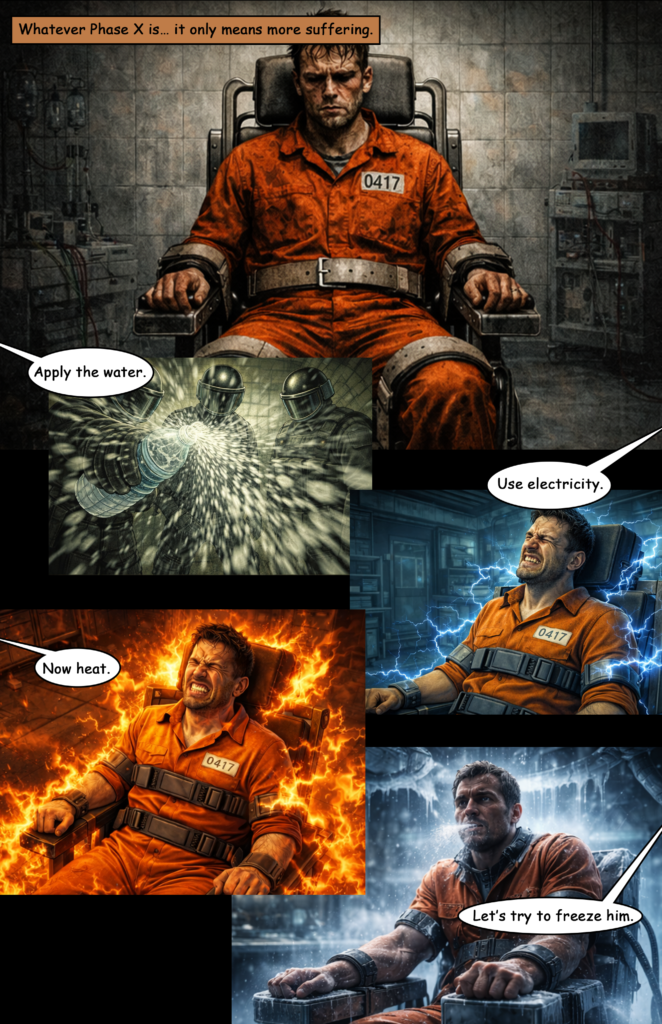

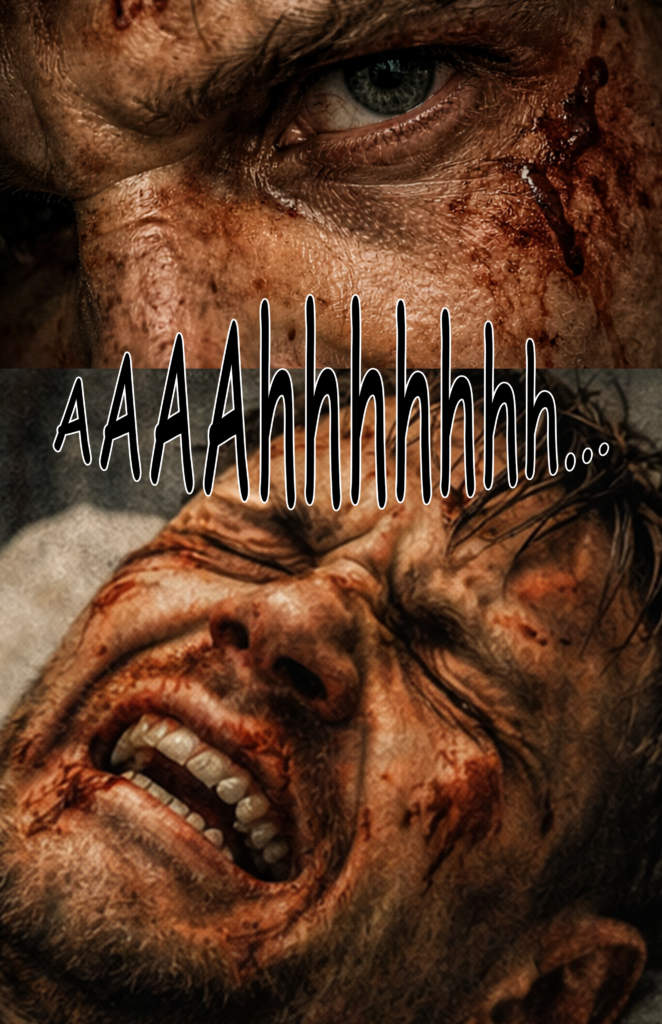

Avoid Violence

Violence gets flagged very easily. Prompts involving fighting, injury, or killing often won’t render.

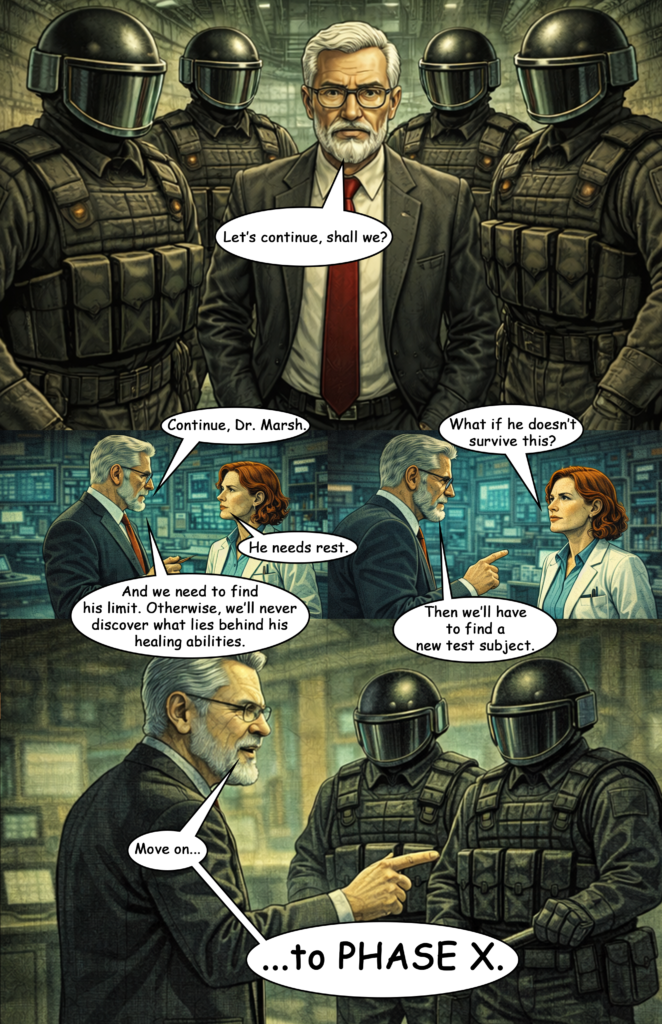

This is a major limitation—at the moment, you’re restricted to stories with minimal physical conflict.

Specify Aspect Ratio

Aspect ratio instructions are sometimes ignored, especially when using multi-image prompts.

Still, using terms like “horizontal shot” or “vertical shot” can help guide composition.

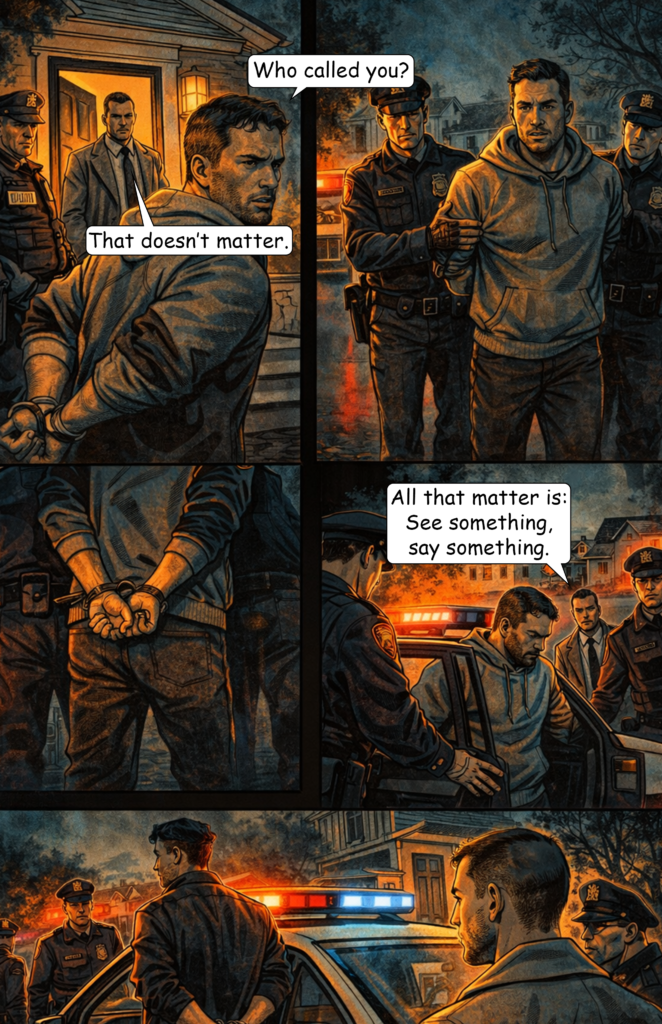

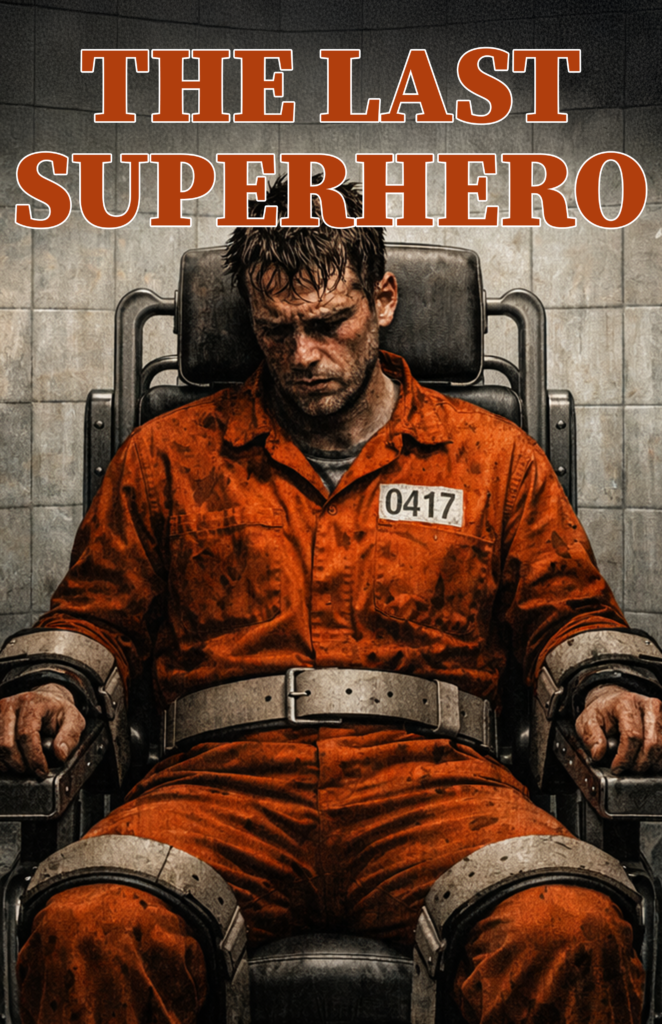

Handle Text Carefully

You can generate text within images, but results are inconsistent.

Sometimes the image looks good but the text is unusable—and sometimes the opposite. Because of this, I added dialogue later in Photoshop myself for better control.

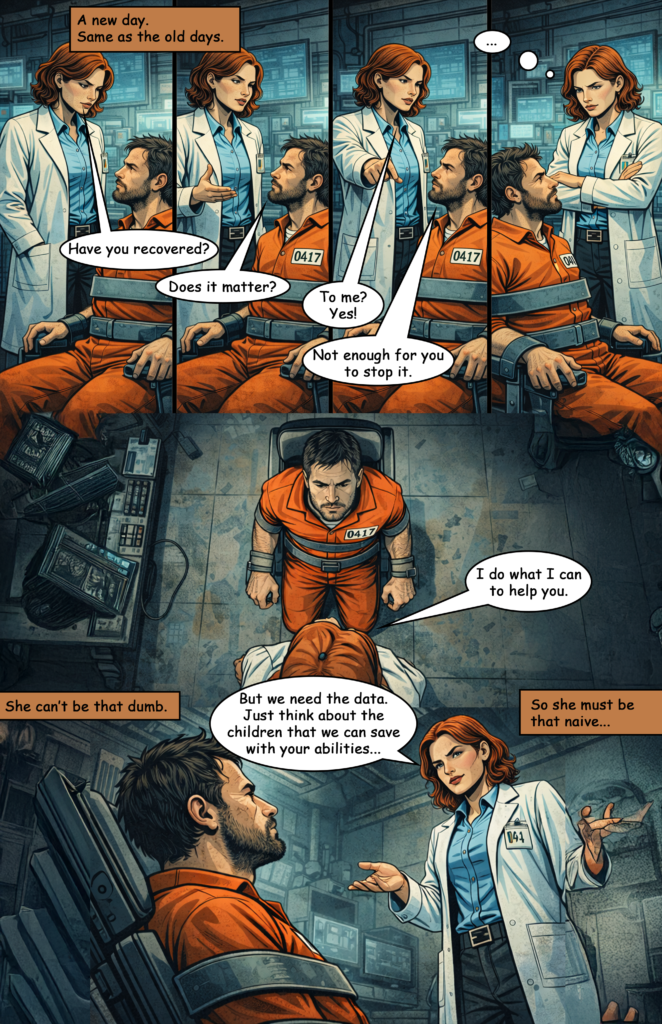

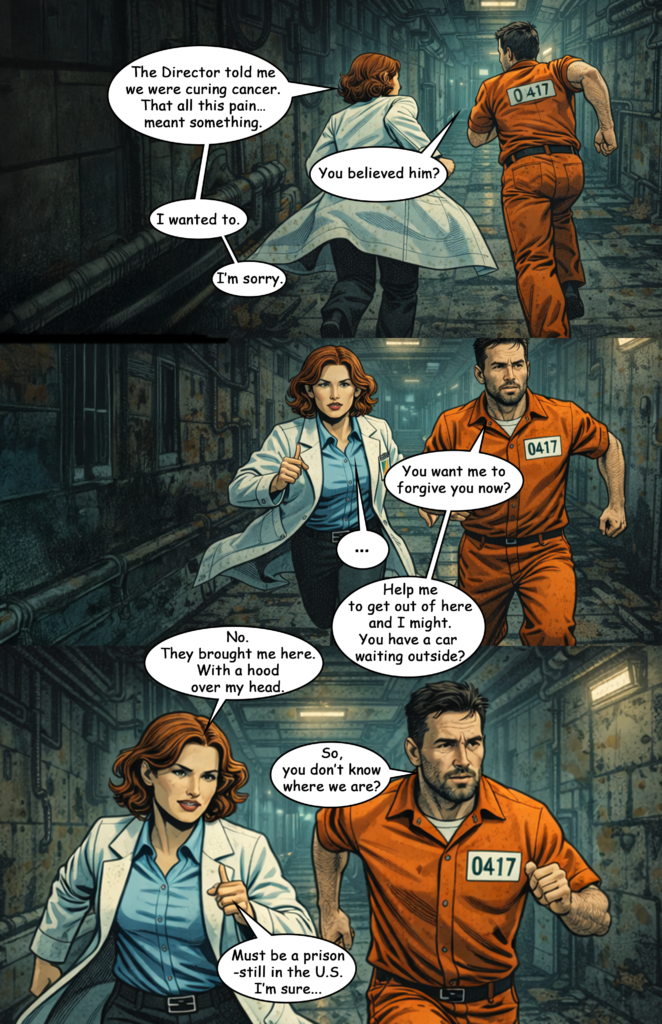

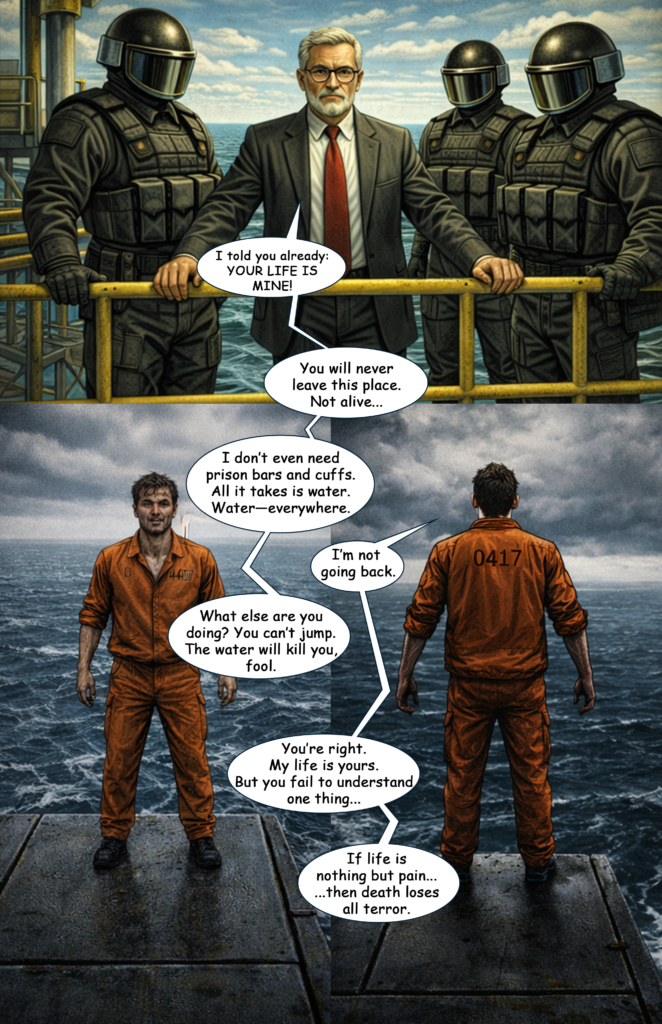

Interestingly, simple text elements within scenes (like “police” on a car or a number like “0417” on clothing) worked surprisingly well and reliably.

Prompt Example

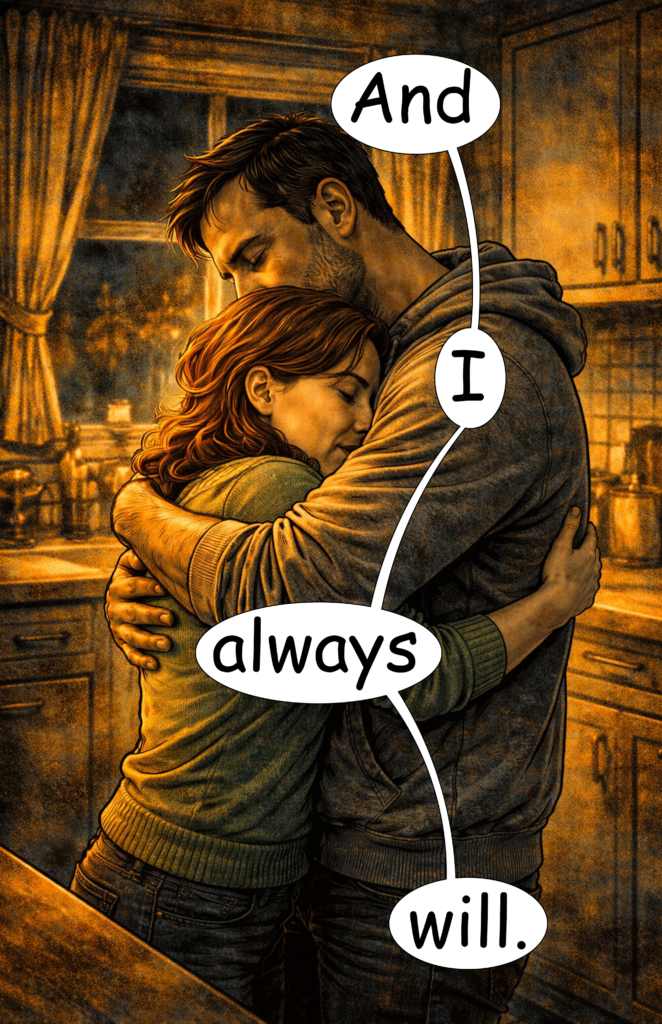

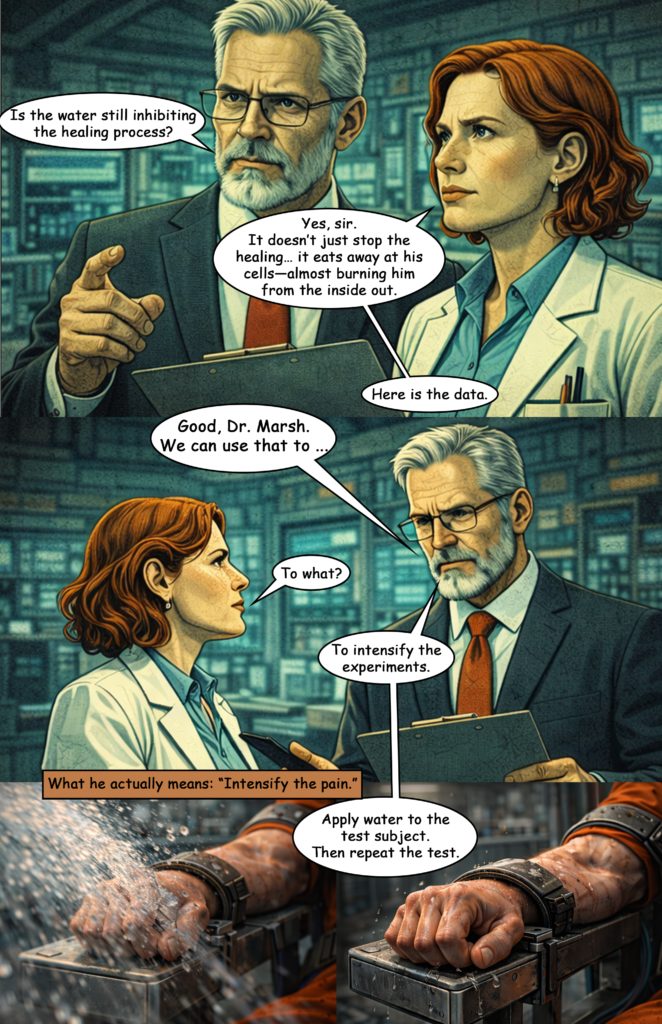

Here’s an example of a prompt I used:

- Style prompt: modern noir comic style, cinematic lighting, orange-teal palette, sharp ink lines, graphic novel page

- Multi-image prompt: panels layout with 4 perspective variations

- Character prompt: 30s man, short dark hair, light stubble, grey hoodie

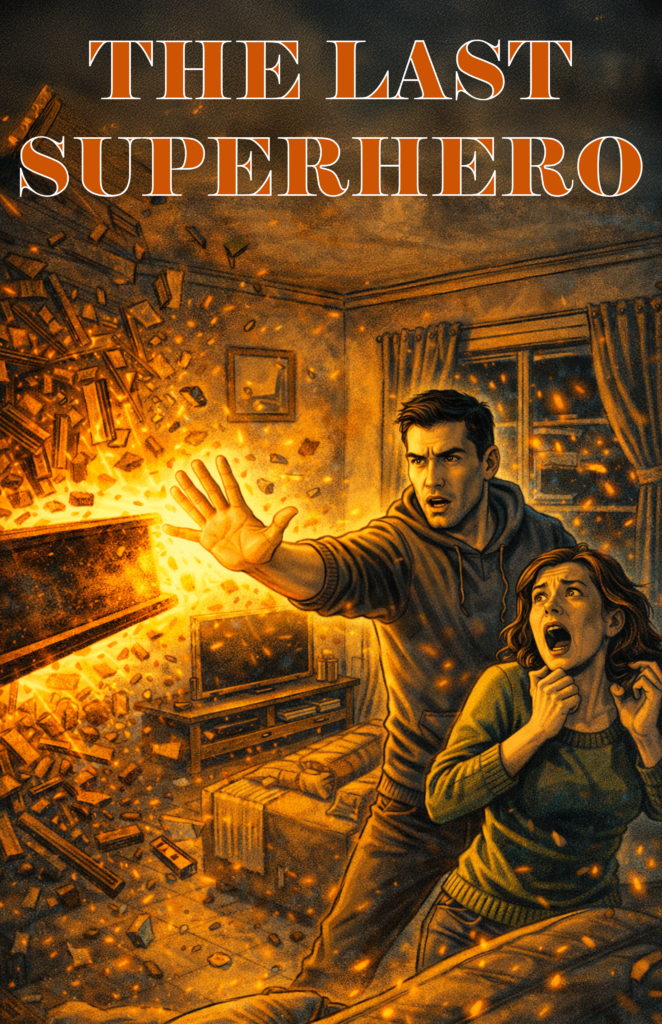

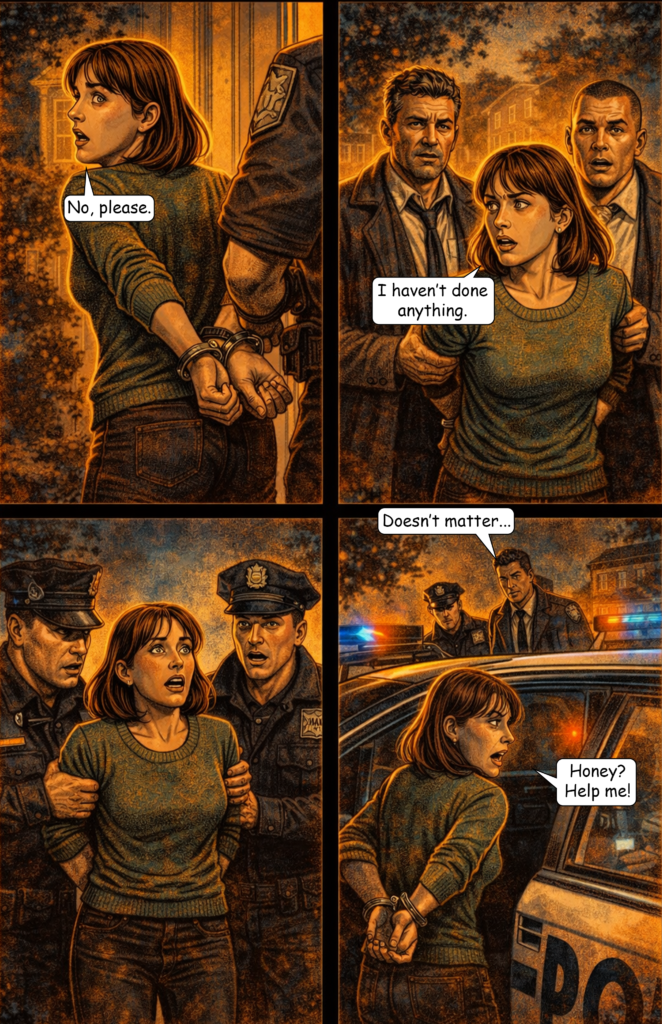

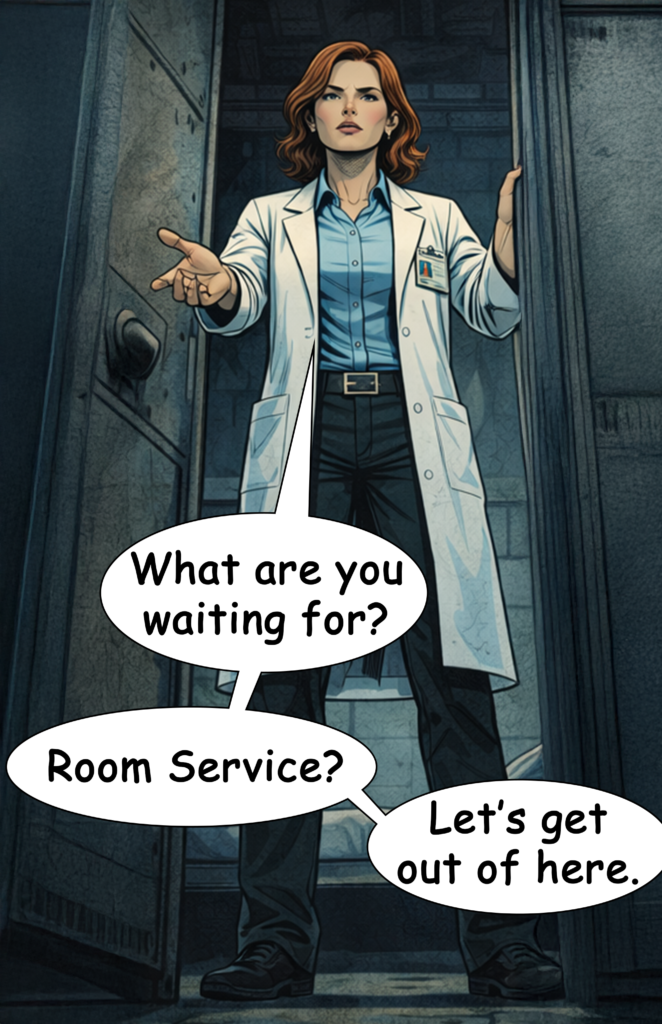

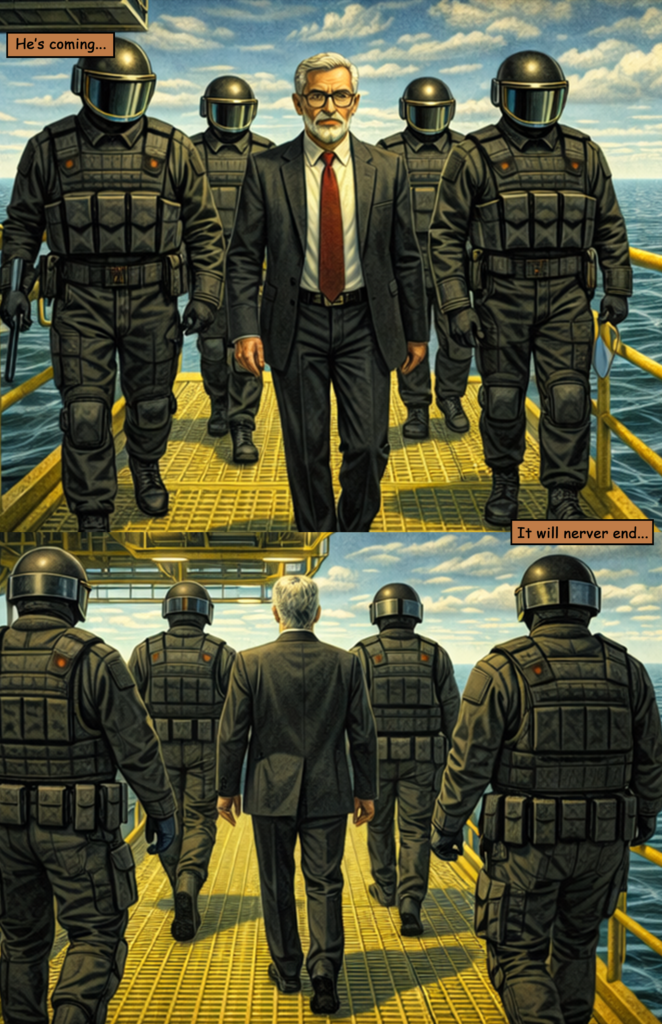

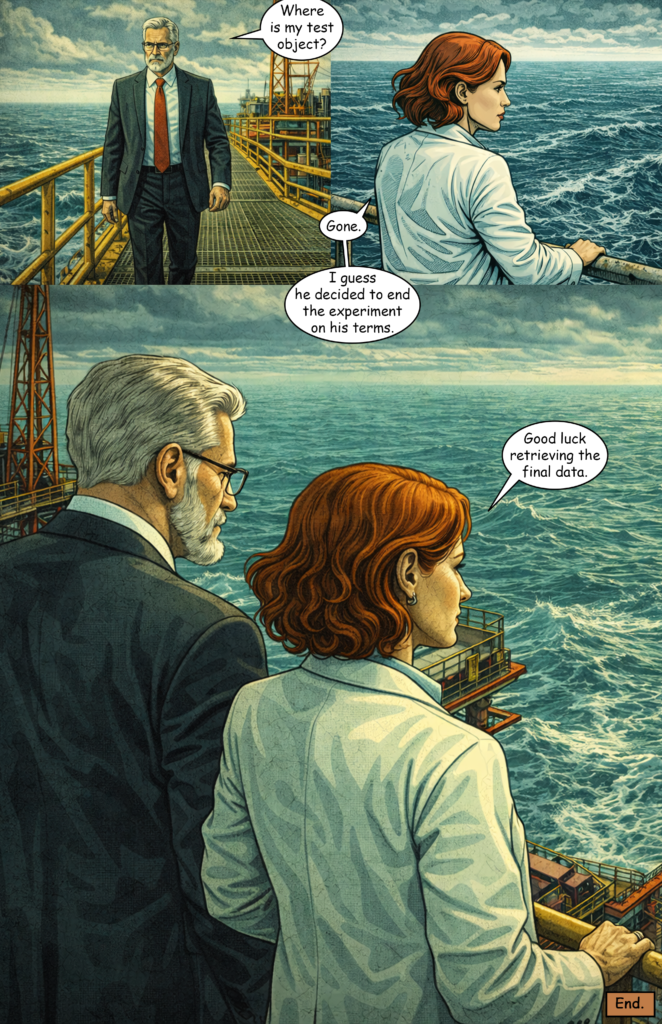

That is what I always added when the main character was part of a scene. Then I added the specific action for the scene. For example, here is the scene prompt for the final comic page:

- Scene prompt: standing, watching suburban street, police car in distance, flashing lights, man standing still in foreground, police car driving away in background, distant perspective, quiet, tense aftermath

Full prompt:

modern noir comic style, cinematic lighting, orange-teal palette, sharp ink lines, graphic novel page, panels layout with 4 perspective variations, 30s man, short dark hair, light stubble, grey hoodie, standing, watching suburban street, police car in distance, flashing lights, man standing still in foreground, police car driving away in background, distant perspective, quiet, tense aftermath
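The full prompt is simply the four parts joined with commas, in the order style, multi-image, character, scene. A minimal sketch of that assembly follows; the variable and function names are hypothetical, while the strings are the ones listed above.

```python
# The four prompt parts listed above; the variable names are hypothetical.
STYLE = "modern noir comic style, cinematic lighting, orange-teal palette, sharp ink lines, graphic novel page"
MULTI_IMAGE = "panels layout with 4 perspective variations"
CHARACTER = "30s man, short dark hair, light stubble, grey hoodie"
SCENE = (
    "standing, watching suburban street, police car in distance, flashing lights, "
    "man standing still in foreground, police car driving away in background, "
    "distant perspective, quiet, tense aftermath"
)


def build_prompt(*parts: str) -> str:
    """Join the non-empty prompt parts with commas, in the order given."""
    return ", ".join(part.strip() for part in parts if part.strip())


full_prompt = build_prompt(STYLE, MULTI_IMAGE, CHARACTER, SCENE)
print(full_prompt)
```

For a scene without the main character, leave out `CHARACTER` and pass only the remaining parts.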

Conclusion

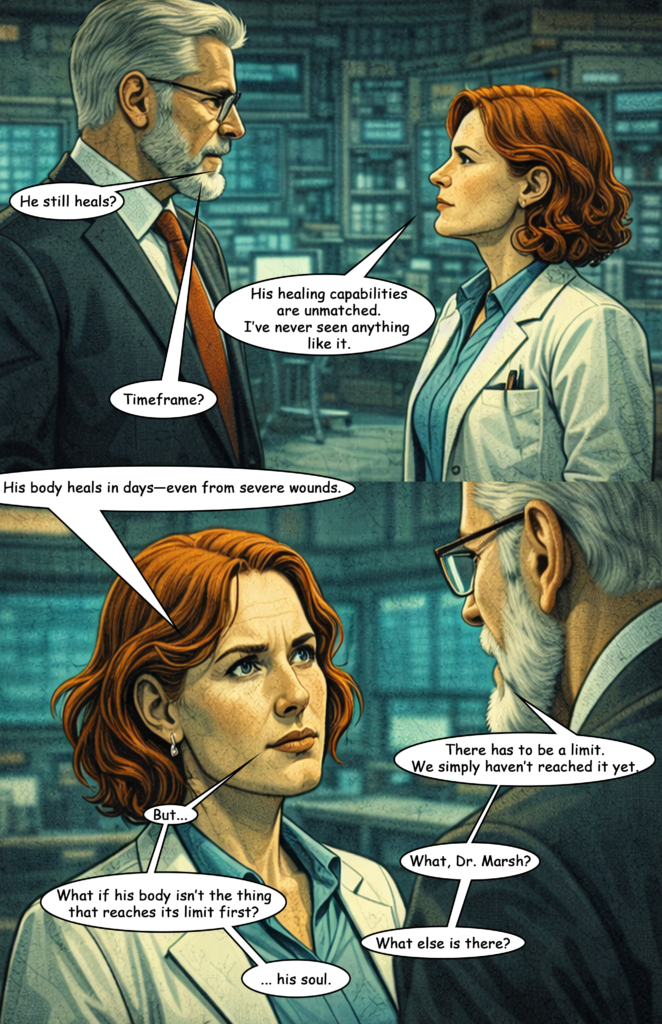

The results were clearly better than my first attempt—and in some ways even better than what Midjourney produced for me last year.

However, it’s still far from offering the creative freedom needed for storytelling.

The biggest issue is content restriction: you can include tension, but not real action. No fights, no violence. Since these elements are essential to many comic narratives, this severely limits storytelling potential.

I reached about 50–60% satisfaction only by designing a story with reduced action. As soon as action becomes central, satisfaction drops drastically.